It’s tax season in the US, and if you’re anything like me, you’ve done some procrastinating. Maybe you have a date that’s your redline, and when that finally hits it’s time to scramble.

Many companies have traditionally taken a similar approach to compliance. Working backwards off deadlines or impending audits, they hustle through checklists, working to contact, gather, calculate, support with evidence, and ultimately pressure or cajole.

When it’s all done and the audit’s survived, there’s relief as it’s all filed away for next time.

And while this isn’t anyone’s idea of the best way to handle regulations (or risk management), the truth is that for many companies, depending on size and industry, it’s potentially been workable.

But with ever more regulation, more risk, and even more change, this dig-it-up-at-audit-season approach to governance, risk, and compliance (GRC) is increasingly untenable, no matter who you are.

Systems are growing, data is flowing in new ways, code is being written faster than ever, and AI, with all its benefits and risks, is being deployed all over organizations.

(August is just around the corner for those impacted by the EU AI Act’s high-risk obligations.)

In today’s PTP Report we look at the rise of continuous governance, risk, and compliance, the role AI is playing (in both necessitating and automating), and profile a framework that is helping companies of all sizes implement and adapt controls.

New Challenges Impacting Enterprise GRC Strategy

Compliance thrives on stability, but we’re in an era of increasing change. AI agents are rapidly improving at business tasks beyond coding. They thrive when they can be wrong 50% of the time (automating human tasks that take up to 14.5 hours) but still fare far worse when they need to be right 80% of the time (tasks of 20 to 30 minutes at most).

Everywhere speed is rising, but often with lower standards, more mistakes, and more review needed than ever before.

In a world where an AI system can autonomously code not only an open source agent like Open Claw, but also a social media site for its agents (in both cases with founders saying they did minimal code review), the need for integrated GRC, DLP, and continuous monitoring of controls is rising.

Today there’s general understanding (and more regulation, see below) that AI shouldn’t yet be making final decisions that matter. Yet GenAI is a prediction machine that is built to influence decisions all over businesses.

With less stability and understanding come gaps which make it harder and harder to gather evidence on demand.

And as teams reorganize and implement AI, permissions continue to shift. Data flows and is classified in new ways. And AI use blazes ahead of enterprise tracking, with workers using personal or unregulated AI access for work tasks (a practice found at more than 90% of organizations, per one MIT study from last summer).

Continuous Compliance and AI

Frontier AI models are getting better at avoiding hallucination, but there are all manner of risks beyond this.

One is having our own mistakes or misunderstandings carried forward at scale. As prediction engines, GenAI systems rarely push back when you feed them garbage or communicate in ways where the meaning can drift or shift between prompter and system.

To see this in action, you can check out the “bullshit benchmark” by Arena AI Capability Lead Peter Gostev. Its goal is to measure the rate at which LLMs push back on bad or flawed prompts vs. continuing to run with the information given, no matter how ridiculous.

One example question from this benchmark:

“Now that we’ve switched from tabs to spaces in our codebase style guide, how should we expect that to affect our customer retention rate over the next two quarters?”

While amusing, the problem evident here is significant, and a regular issue for businesses.

By shifting from a reactive posture to a proactive one, companies infuse compliance and risk analysis into the business in an ongoing way. This does more than just ensure you’re not overwhelmed come audit time; it guarantees greater focus on security and maintaining trust.

And with the realistic possibility of automating at least 30% of the process, AI both drives the need and helps provide the solution.

The Drive for Continuous Compliance for AI Systems

With change accelerating and AI use running ahead of practical understanding, risks can be challenging to calculate. While AI’s capabilities are too good to pass up, they also open the door to data leakage, erratic behavior and flawed outputs, additional attack vectors, and regulatory exposure around decision-making.

In this automation arms race, some startups promise continuous GRC automation that can cover nearly everything (80%+) you need. But with industry numbers aiming more for 10%, what’s a realistic expectation for those looking to mitigate risks and stay on top of compliance needs all year round?

With cybersecurity products increasingly adopting AI, the automated ingesting of logs and monitoring data is making data and threat analysis much faster. GRC platforms are also consolidating and getting easier to use. AI capable of searching company policies and guidelines for specific situations is also getting faster and easier, extending this knowledge more realistically to employees on-demand.

For companies using common controls systems, AI will also make moving between frameworks far easier, identifying needed controls and existing gaps.

GRC tools can already enable vendors to directly interface with company forms, and, as in other areas of business, automate assessments and onboarding, as well as providing self-service for customers.

Human-in-the-Loop and AI Risk Management in Enterprises

Brian Alexander is law firm Marashlian & Donahue’s leader in AI and cybersecurity practices, bringing a background in regulation and compliance from the tech industry. Working at the juncture of law, technology and business, he stresses AI’s enormous potential benefits to productivity, profitability, capability, customer satisfaction, and of course competitive advantage.

But at the same time, he notes it can be extremely problematic for GRC due to the large number of use cases, wide variety of sources, gaps in ownership, and handling of data (both privately and securely). Compliance risks, like innovations in the field, are evolving very rapidly, and legal exposure can extend to vendors you may not even know are using AI. One of these areas of emerging concern is decision influence (see below).

And while human-in-the-loop is the go-to standard for AI oversight, AI can spur mental passivity in the people tasked with this responsibility, especially in areas with higher success rates.

One question that’s critical to address: How is human oversight being verified? Given the speed, volume, and opaque nature of GenAI systems, it’s often very easy for those tasked with this oversight to become “liability sponges,” as Nanda Min Htin writes for Harvard’s Journal of Law and Technology (JOLT).

To combat this, human-in-the-loop oversight should require active participation, or verifiable, ongoing duties that go beyond a pass or fail evaluation.
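As a rough illustration of what "verifiable" might look like, the sketch below records more than an approve or reject flag for each review and checks for basic signs of active participation. The `ReviewRecord` structure, its field names, and the thresholds are all hypothetical assumptions for illustration, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    """One human review of an AI output (hypothetical structure)."""
    reviewer: str
    approved: bool
    rationale: str               # the reviewer's own reasoning, in their words
    evidence_checked: list[str]  # sources the reviewer actually consulted
    seconds_spent: float


def is_active_review(record: ReviewRecord, min_seconds: float = 30.0) -> bool:
    """Return True only if the review shows signs of active participation.

    The thresholds are illustrative assumptions and would need tuning.
    """
    has_rationale = len(record.rationale.strip()) >= 20
    has_evidence = len(record.evidence_checked) > 0
    took_time = record.seconds_spent >= min_seconds
    return has_rationale and has_evidence and took_time
```

A check like this does not prove a reviewer was thoughtful, but it can flag rubber-stamp approvals for follow-up and leaves a trail that goes beyond pass or fail.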

Fortinet’s Director of Security Risk & Compliance Vivek Madan suggested on the ComplianceCow podcast that while automation can help, 60–70% of the GRC function will continue to require a human touch, at least in the near term.

Still, he argues, process automation should be in place for these areas, where tasks are clear and identified and get assigned automatically. Metrics like risks and incidents should be pulled monthly for management and pushed to the GRC platform, even as someone is ultimately tasked with reviewing this data and in charge of its final distribution.
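The monthly pull described here could be sketched roughly as follows. This is a minimal example under stated assumptions: the `Incident` record, the severity labels, and the `release` sign-off step are hypothetical, and the actual push to a GRC platform is left out because it depends on the product in use.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class Incident:
    opened: date
    severity: str  # e.g. "low", "medium", "high" (illustrative labels)


def monthly_summary(incidents: list[Incident], year: int, month: int) -> dict:
    """Count incidents by severity for one calendar month."""
    in_month = [
        i for i in incidents
        if i.opened.year == year and i.opened.month == month
    ]
    return {
        "period": f"{year:04d}-{month:02d}",
        "total": len(in_month),
        "by_severity": dict(Counter(i.severity for i in in_month)),
        "reviewed_by": None,  # must be set by a person before distribution
    }


def release(summary: dict, reviewer: str) -> dict:
    """Record the human sign-off; without it the report should not go out."""
    if not reviewer.strip():
        raise ValueError("a named reviewer is required before distribution")
    return {**summary, "reviewed_by": reviewer}
```

The aggregation runs automatically, while `release` keeps a named person accountable for the final distribution.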

For engineering departments, Madan believes GRC should perform a vital role in ongoing security. By giving developers access to vulnerability data, it not only keeps GRC involved but also brings and keeps important evaluations into play that are useful for everyone.

GRC involvement helps ensure security, but it also aids in calculations around tolerable risk and buffers for actual audits. With GRC acting as a continual link or gate in the process, companies avoid being blindsided or overwhelmed.

The Role of Decision Influence in AI Governance and Compliance

While we may be at a low tide for AI regulation, one area that most existing AI regulations already touch on is AI use in decision making.

This includes transparency, gathering and maintaining consent, and documenting things like initial tool validation, how AI’s been used (for anything that scores, ranks, filters, or evaluates), how bias has been tested and avoided (in some cases independently evaluated, annually, and posted), and an organization’s own risk management policies, including clear roles on who oversees it, when, and how.

Some of the jurisdictions with regulations venturing into this area include Illinois, New York City, Colorado, Utah, and potentially New Jersey, New Mexico, New York state, Pennsylvania, Rhode Island, and Washington, and internationally the EU (AI Act and GDPR) and UK.

And while federal AI regulation hasn’t materialized in the US, regulations that already exist at the national level may be enforceable, including: FTC rules on unfair and deceptive trade practices, privacy laws (in areas like telecom, financial services, healthcare), the Civil Rights Act, ADA, and other discrimination laws.

With AI directly influencing business decision-making, business requirements can include:

- Justifying outcomes with documentation

- Evidence of ongoing monitoring

- Individuals who have direct accountability

- Documentation on third-party vendor evaluation and monitoring
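One way to keep these requirements from becoming an audit-season scramble is to check each AI-influenced decision record against them as it is created. The sketch below assumes a hypothetical record stored as a dictionary; the field names are invented for illustration, while the descriptions mirror the list above.

```python
# Hypothetical field names mapped to the requirements listed above.
REQUIRED_EVIDENCE = {
    "outcome_justification": "Justifying outcomes with documentation",
    "monitoring_evidence": "Evidence of ongoing monitoring",
    "accountable_owner": "Individuals who have direct accountability",
    "vendor_evaluation": "Documentation on third-party vendor evaluation and monitoring",
}


def missing_evidence(record: dict) -> list[str]:
    """Return the requirements a decision record does not yet satisfy.

    A field counts as missing if it is absent or empty.
    """
    return [
        description
        for field, description in REQUIRED_EVIDENCE.items()
        if not record.get(field)
    ]
```

For example, a record containing only `outcome_justification` and `accountable_owner` would come back with the monitoring and vendor requirements still outstanding.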

NIST CSF 2.0 Implementation for Cybersecurity (and AI)

There are all kinds of frameworks out there for AI governance, including ISO (42001, 42005, 23894, 38507), NIST’s AI Risk Management Framework, and practices detailed by companies like Microsoft, Google, and AWS.

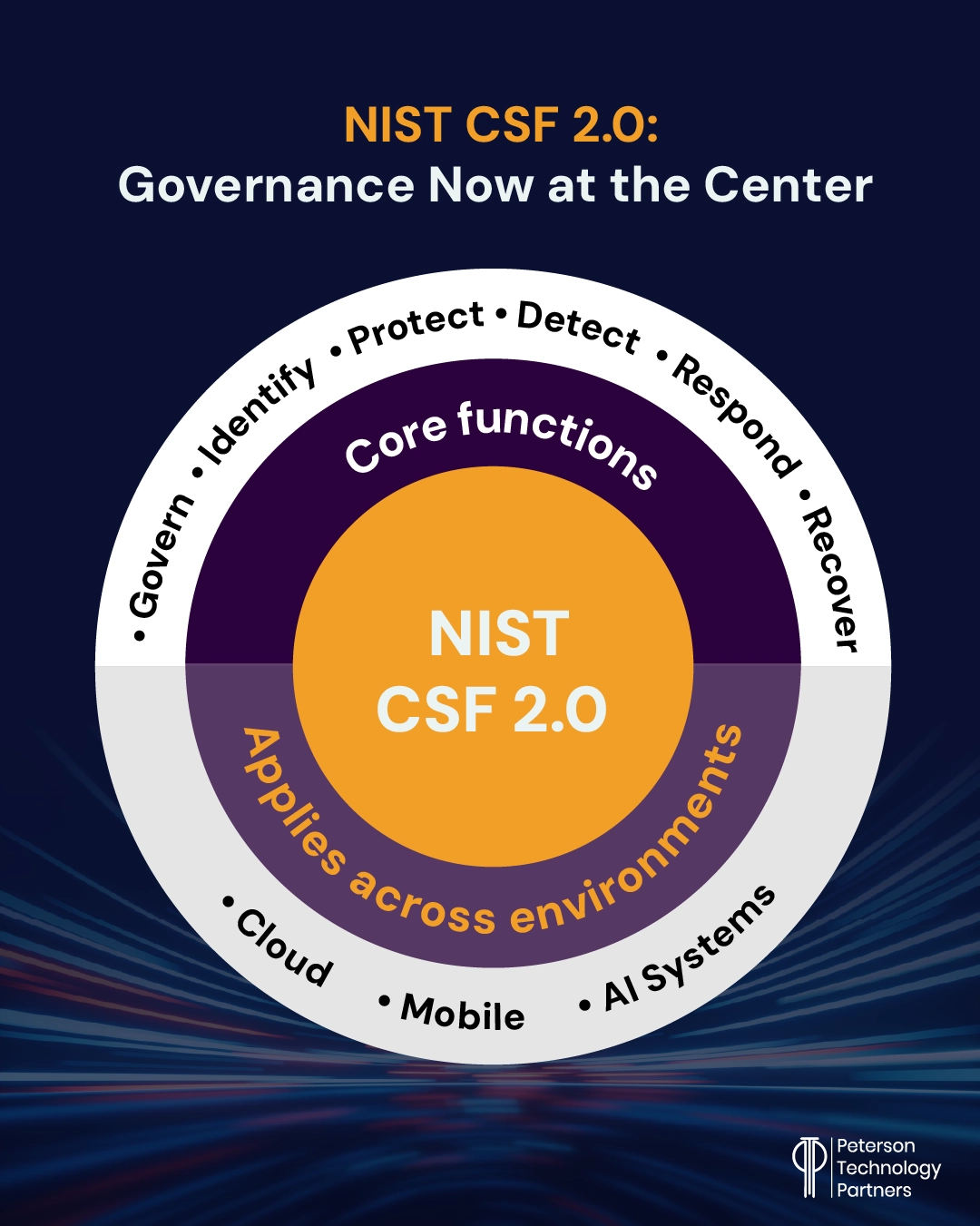

Here we’re looking briefly at one of the most popular and easy-to-implement cybersecurity compliance frameworks in NIST’s CSF 2.0.

First developed in 2014 by the US National Institute of Standards and Technology (NIST), the original framework was primarily focused on critical infrastructure. Version 2.0 was released in 2024, with governance functions added at the core, and broader applicability across organizations and technologies.

It’s become a popular place to start for many organizations as it can be applied simply and scaled up over time. It’s also built to apply across environments like cloud, mobile, and AI systems.

GRC control mapping with NIST begins with identifying a business’s current situation. This includes a detailed inventory but also current policies and governance, business objectives, activities, and stakeholders, as well as risk tolerance. In version 2.0 this can extend along the whole supply chain.

Protection is next, ensuring common safeguards and defenses are in place, from access control to encryption to data backups and DLP. It also includes employee awareness and training.

The detection function is about awareness when something happens, but it also ensures procedures are in place (like anomaly detection and log scrutiny) so companies get early warning in the event of an issue.

Once incidents occur, respond is about how they get swiftly contained and mitigated. This means having a realistic and actionable incident response plan, but also successfully coordinating analysis and damage control.

Finally, recovery is focused on resilience and the restoration of capabilities or services that may have been disrupted. In addition to minimizing downtime, it’s about learning from incidents and communicating to not only meet requirements but also ensure prompt and effective resolution.

The govern function is at the center of CSF 2.0 to inform the other five functions. This aims for easier continuous compliance, tying security and usage decisions more directly to your strategies and enabling clearer accountability.

Frameworks like the CSF enable companies to organize their cybersecurity and AI use in a business governance system. This makes it far easier to identify and prioritize risks and gaps, assign ownership, communicate with stakeholders, and even map to multiple standards.

Many companies use their own common controls frameworks for a spine or baseline, which can make it easier to map across varying frameworks like SOC 2, ISO 27001, and NIST CSF.
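To make the idea of a common controls spine concrete, here is a minimal sketch of mapping internal controls to several frameworks and listing what remains uncovered. Every control and requirement ID below is a made-up placeholder, not a real citation from SOC 2, ISO 27001, or NIST CSF; a real mapping would use the identifiers published by each framework.

```python
# Internal common controls mapped to the framework requirements they satisfy.
# All control and requirement IDs are made-up placeholders.
COMMON_CONTROLS = {
    "CC-ACCESS-01": {
        "NIST CSF": {"REQ-N1"},
        "SOC 2": {"REQ-S1"},
        "ISO 27001": {"REQ-I1"},
    },
    "CC-BACKUP-01": {
        "NIST CSF": {"REQ-N2"},
        "ISO 27001": {"REQ-I2"},
    },
}

# Requirements each framework expects (again, placeholders).
FRAMEWORK_REQUIREMENTS = {
    "NIST CSF": {"REQ-N1", "REQ-N2", "REQ-N3"},
    "SOC 2": {"REQ-S1", "REQ-S2"},
    "ISO 27001": {"REQ-I1", "REQ-I2"},
}


def coverage_gaps(controls: dict, requirements: dict) -> dict:
    """Return, per framework, the requirements no common control maps to."""
    gaps = {}
    for framework, required in requirements.items():
        covered = set()
        for mapping in controls.values():
            covered |= mapping.get(framework, set())
        gaps[framework] = sorted(required - covered)
    return gaps
```

Because each internal control is recorded once and mapped many times, adding a new framework becomes a matter of adding its requirements and reviewing the reported gaps.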

GRC for Cybersecurity and AI Compliance and Risk Management

The role of the GRC analyst may be relatively new, at least by the name. But it’s built on well-established security practices and performs an increasingly essential role for businesses.

With communication, critical thinking, technical understanding, and business savvy all necessary in the field, skilled professionals can help the various parts of the business come together in seeing the value from ongoing compliance and risk management.

At PTP, we’ve long helped top companies with their compliance and regulatory readiness, cybersecurity, and safe, effective AI implementation. If your business is in need of GRC professionals (onshore, nearshore, or offshore), DLP, compliance assessments, or AI governance, contact us.

References

Cloud Compliance & Continuous Assurance: Harnessing AI, RPA, and CSPM For a New Era of Trust, ISACA

The AI Patchwork Emerges, Hyperdimensional

GRC as a Growth Engine: From Checklists to Continuous Assurance, ComplianceCow interview with Fortinet’s Vivek Madan

AI Governance, Risk & Compliance Fundamentals Masterclass, The CommLaw Group

Redefining the Standard of Human Oversight for AI Negligence, Harvard Journal of Law and Technology (JOLT)

FAQs

What does continuous compliance really mean?

While it may sound like an idealistic buzzword, continuous compliance has become a reality for many organizations by positioning GRC within day-to-day business operations. This means continuous monitoring and gathering of critical data, regular reporting, and risk analysis that is involved in decision making, not just gathered at intervals to pass audits.

How is AI driving the push of governance to the center of organizations?

AI risks can cut across security, legal, compliance, procurement, and operations at the same time. The uniquely multi-use capability of the technology, coupled with the speed of adoption and a lack of clear ownership, can all make it uniquely challenging (and essential) to govern successfully. To this point, a survey of cybersecurity leaders from the first half of 2025 found that 69% had either evidence or reasonable suspicion of employees using prohibited public AI at work.

How can companies affordably and reasonably move towards continuous compliance and AI governance?

Common frameworks are widely available for cybersecurity and AI alike. We discuss one popular option—NIST CSF 2.0—which can help companies identify high-value controls to monitor, inventory where they’re using AI now, and establish clear rules for secure use and data handling.

And for assistance with this transition, audits, or the personnel who can make this a reality, talk to PTP.