Has AI cheating gone mainstream?

Whether it’s using other devices or tabs for chatbot access, direct audio feed, or specialty cheating apps with overlays, there are more ways to cheat in an online interview than ever before.

And with AI as one of the hottest job skills, and AI use expected for almost every tech job, applicants may cry foul at being asked to even pretend to interview without it.

Add to this entirely fake candidates—using fake IDs or even AI deepfakes to get jobs under false pretenses (something Gartner predicts will account for one in every four applicants by 2028)—and it’s easy to see why so many are calling job interviews “broken.”

(For two good examples, see “Job Interviews Are Broken” from last October’s The Atlantic or Business Insider’s “Hiring for tech jobs is broken” from last April.)

We wouldn’t go quite that far at PTP, though we’ve written several times about these problems from the POV of a tech recruiting and consulting firm, and why (and how) they’re spiking now.

(For more on this, check out our PTP Report on deepfake hiring scams or read a detailed profile by PTP’s Founder and CEO on the surge in AI hiring issues.)

Today we’re focusing more on solutions than on the problems. Specifically, we’re looking at the increase in cheating and fraud, and what we’re doing about it.

Why We’re Seeing More Interview Fraud and Cheating

98% of workers say they want to work remotely at least some of the time, and some 33 million Americans already do, according to Upwork. A substantial percentage of interviews are done online, with 79% of hiring managers using video technology in 2024 to conduct them.

The benefits can be enormous, including a faster and far more convenient process for all parties.

But from the start, remote interviewing has also opened the door to new ways of cheating in technical interviews, for example by exploiting reduced visibility, or even by going through the process (and eventually working) under a false identity.

This trend has exploded with AI. While the World Economic Forum estimates 90% of employers use AI to filter or rank resumes, applicants, too, have jumped on board. As our banner stat points out, by some surveys a majority of candidates perceive that using AI in interviews is now just part of the process.

Why?

The reasons are many, including:

- If a company expects employees to use it on the job, isn’t using it in the interview also reasonable? This argument has been accepted (somewhat) by some companies like Meta, which now allows AI in one of its coding rounds (including AI code verification as part of the evaluation).

- Because the companies themselves use it, some candidates feel it’s fair game.

- Many job seekers feel it is already an unfair process (92%, per Greenhouse).

- AI tools are free or cheap and easy to use, especially compared to the cost of missing out on potential wages.

- There are more applicants for fewer entry-level jobs, with more than half of US job seekers overall spending six months or more searching, per LinkedIn’s 2025 Workplace Confidence survey.

As trending videos on TikTok and YouTube dramatize, many believe both that it’s extremely common and that they will never get caught.

A year ago, Blind found that one in five US professionals were secretly using AI in interviews, based on data from thousands of applications through its platform. CodeSignal in February 2026 found that cheating on technical assessments had doubled in just one year, from 16% to 35%.

Anthropic noted in January they’ve had to rewrite their own technical interview questions because so many candidates were cheating with Claude.

And with the average recruiter now handling more than twice as many applications as they did in 2021, and conducting some 40% more interviews per hire (per Gem’s Recruiting Benchmarks), the bandwidth for handling all of this is narrower than ever.

How to Prevent Fake Candidates and Screen for Cheating

So that’s the bad news.

But to adjust proactively to these trends, we’re taking advantage of candidate verification technology in hiring, combining AI, recruiter expertise, and our own rigorous process.

We already know this approach improves time-to-hire and cost-per-hire metrics, but we also believe it improves the experience for everyone involved, in an era that Greenhouse’s chief executive Daniel Chait described to the New York Times as “the wild, wild West right now” for hiring.

Here we profile some of our automated techniques and AI hiring security solutions.

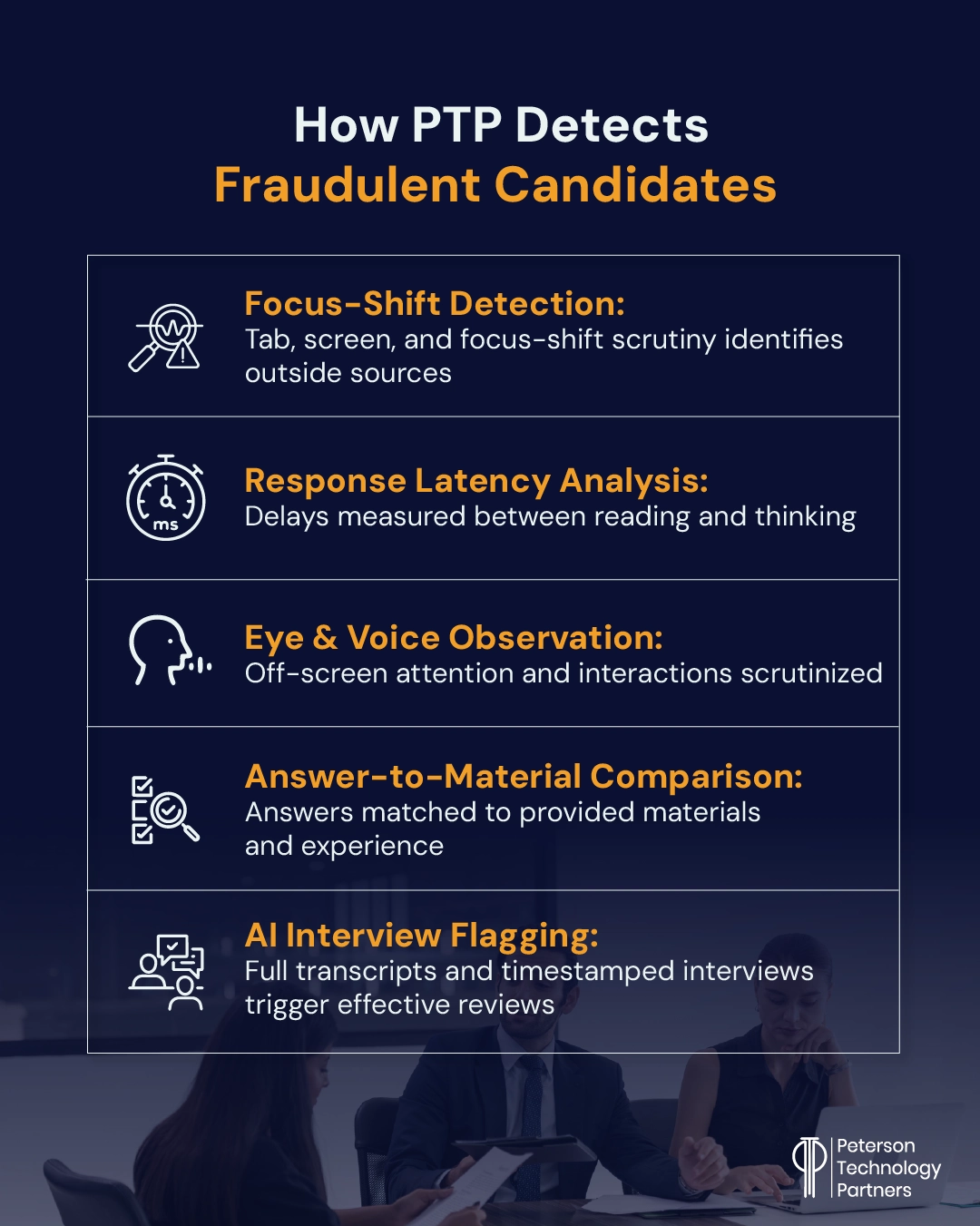

What Is Focus-Shift Detection and How Does It Work?

The most obvious way that people cheat is also one of the easiest to catch: looking at another screen, tab, or browser. In our interviews, we don’t allow second screens, but we have unfortunately still flagged candidates using them, revealing tools such as Cluely, Parakeet, or AI chatbots.

Browser focus signals offer another measure. They register when attention moves between the interview session and another tab, giving a clear indication that an interviewee has navigated away from a session in progress.
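As a rough illustration of how focus signals like these could be processed, here is a minimal sketch. It assumes the interview page logs a timestamped event whenever the tab loses or regains focus (browsers expose `blur`, `focus`, and `visibilitychange` events for this). The `FocusEvent` type, the function names, and the three-second threshold are hypothetical choices for the example, not a description of any particular product.

```python
from dataclasses import dataclass


@dataclass
class FocusEvent:
    timestamp: float  # seconds since the interview session started
    kind: str         # "blur" = tab lost focus, "focus" = tab regained focus


def away_intervals(events, session_end):
    """Return (start, end) pairs during which the interview tab was not focused."""
    intervals = []
    blur_start = None
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.kind == "blur" and blur_start is None:
            blur_start = event.timestamp
        elif event.kind == "focus" and blur_start is not None:
            intervals.append((blur_start, event.timestamp))
            blur_start = None
    # A session that ends while the tab is unfocused still counts as time away.
    if blur_start is not None:
        intervals.append((blur_start, session_end))
    return intervals


def flag_focus_shifts(events, session_end, min_seconds=3.0):
    """Keep only absences long enough to be worth a reviewer's attention.

    min_seconds is an assumed threshold; very short blurs are often
    notifications or accidental clicks rather than anything meaningful.
    """
    return [
        (start, end)
        for start, end in away_intervals(events, session_end)
        if end - start >= min_seconds
    ]


log = [
    FocusEvent(10.0, "blur"), FocusEvent(11.0, "focus"),
    FocusEvent(40.0, "blur"), FocusEvent(52.5, "focus"),
    FocusEvent(90.0, "blur"),
]
flagged = flag_focus_shifts(log, session_end=100.0)
```

In this sample log, the one-second absence is ignored while the two longer ones are kept as intervals a reviewer can check against the interview recording.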

Viewing other screens or devices (such as a phone propped up below) is not allowed, but when it happens, it also requires unnatural eye movement (see below).

How Response Timing Is Part of AI Interview Cheating Detection

If we asked you right now how to detect fake candidates, most likely you would break eye contact and pause to think, at least momentarily. This is normal human behavior and deviations from it can indicate something strange is going on.

Response times also vary by question, almost by necessity, and answers almost never arrive with perfect structure.

When answers are given at steady intervals, either without sufficient pause or with a suspiciously consistent one, and arrive fluidly and with perfect structure, it’s one cue to our automated systems and human recruiters alike that something is amiss.
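One simple way to put a number on "steady intervals" is the coefficient of variation of response latencies: the standard deviation divided by the mean. The sketch below is only an illustration under assumed inputs. The latency values, the function names, and both thresholds are hypothetical, and a low score is a cue for review, not proof of anything.

```python
from statistics import mean, pstdev


def timing_variation(latencies):
    """Coefficient of variation of the pauses (in seconds) before each answer."""
    if len(latencies) < 2:
        raise ValueError("need at least two response latencies")
    average = mean(latencies)
    if average <= 0:
        raise ValueError("latencies must be positive")
    return pstdev(latencies) / average


def looks_too_uniform(latencies, min_variation=0.25, min_answers=5):
    """Flag sessions whose pauses barely vary from question to question.

    Both thresholds are assumptions for this example. With too few answers
    there is not enough evidence, so nothing is flagged.
    """
    if len(latencies) < min_answers:
        return False
    return timing_variation(latencies) < min_variation


steady = [2.0, 2.1, 1.9, 2.0, 2.0]    # nearly identical pause before every answer
natural = [0.8, 4.5, 1.2, 7.0, 2.5]   # pauses that track question difficulty

steady_flag = looks_too_uniform(steady)
natural_flag = looks_too_uniform(natural)
```

Here the steady session is flagged and the natural one is not. In practice a signal like this would be weighed alongside others, since some people simply answer at an even pace.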

Remote Interview Cheating Detection Tools Look to Eye Movement and Voice

People in conversations break eye contact, of course, but neither in a regular pattern nor with the concentrated focus it takes to read from another source.

This is why many cheating tools have added invisible overlays in a bid to cover this tell. Ironically, an overlay can itself be a clear trigger, because reading responses takes a kind of focus that is distinct from thinking, formulating, and responding naturally.

Ever since remote interviews began, one concern has been offscreen assistance (same as with remote testing). This could include someone listening in and providing messages or even audio assistance.

In addition to eye movement, voice detection is important to ensure not only that just one person is giving a response, but also that it is actually a person and not an AI deepfake.

Answers Are Another Part of Fraudulent Job Candidate Screening

One of the best automated solutions to interview fraud is answer analysis. This means tying answers back to a candidate’s provided credentials, for example, but also examining the responses themselves for signs of AI generation.

Our recruiting automation looks at format, structure, and word choice, but we also consider specificity and personal connection.

One of the best ways to identify applicant fraud is through responses related to resume material. Can they talk naturally about jobs they’ve had or places they’ve lived?

It might sound like a no-brainer, but that assumes the person you’re interviewing is actually who they claim to be.

While it is possible to steal identities and fabricate (or swipe) realistic employment and educational backgrounds, it’s far harder for a fake candidate (or AI deepfake) to naturally fake realistic personal experience with these things.

The Limits of AI Recruiter Fraud Detection: Flagging Issues for Review

Ultimately, our AI platforms may be most helpful in this arena—identifying potential issues for review while providing clear, specific records and systematic updates.

This limits false positives by recording evidence that is then given additional review. No system, human or AI, is going to be perfect, and our goal remains to pair great candidates with the right opportunities.
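The flag-for-review idea can be sketched as a small record structure. This is a hypothetical illustration, not our production schema: the class names, fields, and status values are assumptions. The point it shows is that automated signals only attach evidence and route a session to a person. The record has no "rejected" state that automation could set.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    CLEAR = "clear"
    NEEDS_HUMAN_REVIEW = "needs_human_review"
    # Deliberately no REJECTED value: that decision belongs to a recruiter.


@dataclass
class Flag:
    signal: str       # which check raised it, e.g. "focus_shift"
    evidence: str     # specific, reviewable detail
    timestamp: float  # seconds into the session


@dataclass
class InterviewRecord:
    candidate_id: str
    flags: list = field(default_factory=list)

    def add_flag(self, signal, evidence, timestamp):
        self.flags.append(Flag(signal, evidence, timestamp))

    @property
    def status(self):
        # Any flag routes the session to a human; none leaves it clear.
        return Status.NEEDS_HUMAN_REVIEW if self.flags else Status.CLEAR


record = InterviewRecord(candidate_id="example-123")
status_before = record.status
record.add_flag("focus_shift", "tab unfocused for 12.5s during question 4", 40.0)
status_after = record.status
```

Keeping the evidence string and timestamp on each flag is what lets a reviewer confirm or dismiss it quickly instead of re-watching the whole session.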

These methods are all geared to limit the amount of time recruiters have to waste dealing with nonsense and rote verification.

The goal is always for them to focus on getting to know people and moving the process forward both fairly and effectively.

Regulations and the Importance of Procedural Integrity

We often talk in our AI roundups about state and federal AI regulations, but in hiring, existing regulations are also highly relevant.

And while traditional oversight in this arena has tended to focus on outcomes and final decisions, AI automation is moving this focus upstream, with added scrutiny on decision influence, bias, and how AI errors are ultimately being measured and caught.

A class action lawsuit against Eightfold AI filed in January uses the Fair Credit Reporting Act to allege reporting violations (that not all data has been shared) rather than discrimination.

Taken together with suits like the one against Workday alleging discrimination, it’s clear that AI hiring practices must be as transparent, well governed, and well documented as possible.

All of this only increases the importance of procedural integrity, effective technological understanding and implementation, and quality partnerships.

At PTP, we have nearly three decades of experience providing outstanding technical recruiting and consulting solutions. If you’re looking for a partner to help you ensure integrity and quality coupled with the efficiency benefits of AI, contact us.

References

Top Remote Work Statistics And Trends, Forbes Advisor

Recruiters Use A.I. to Scan Résumés. Applicants Are Trying to Trick It., The New York Times

Meta Is Going to Let Job Candidates Use AI During Coding Tests, Wired

1 in 5 U.S. Professionals Secretly Use AI During Job Interviews, Blind Workplace Insights

Hiring for tech jobs is broken, Business Insider

The job market is so bad that ‘reverse recruiters’ are charging $1,500 a month just to help people look for jobs, Fortune

What the lawsuit against Eightfold signals for AI in recruiting, Staffing Industry Analysts

FAQs

Can AI detect cheating in interviews?

Yes and no. Today’s AI hiring automation is adept at flagging suspicious interview behavior, though, technically, it cannot reliably prove cheating on its own.

With more pressure on hiring transparency and well-governed AI solutions, it’s essential that companies effectively combine process, fine-tuned automation, and quality human oversight.

At PTP, we’re AI-first but have long perfected our hiring process integrity, combining the speed and efficiency of automation with skilled human recruitment.

What are the risks of AI cheating in interviews?

The most obvious risk of cheating in interviews is the impact on quality-of-hire. While some technical interview procedures have long been contentious with many candidates, the goal was to assess a candidate’s capacity to problem solve, learn, and think on their feet, something which AI cheating can circumvent.

It is also a potential security and compliance problem, with fraudulent candidates exposing companies to risks including data theft and cybercrime.

Can AI detect deepfake candidates?

Yes, but only sometimes. While effective at flagging potential issues, these solutions work best when part of a layered approach that includes skilled human review. As detailed in the article, AI cannot be the sole means for rejecting a candidate.

At PTP, our AI hiring automation helps our recruiters catch more than 95% of fraudulent candidates in the early evaluation phases.