Before the COVID-19 pandemic exploded in the US in February 2020, some people were already warning the public about it. But most weren’t paying attention. And within three weeks, “the entire world changed.”

This is the opening of a viral article on AI that made the rounds in mid-February, written by startup founder Matt Shumer. It warns that comparable change from AI is nearly here and that those inside the AI industry are struggling to get everyone else to pay sufficient attention.

His advice? Don’t get washed away by an AI tidal wave. Learn to use it, avoid debts, and prepare for massive change. He writes that since the release of Claude Opus 4.6 and OpenAI’s GPT-5.3 Codex, it’s become clear to him and people like him that the time to prepare is not tomorrow.

Because it’s already here.

Spreading the word is also the goal of our PTP Report AI news roundups. Today we focus on the year’s shortest month, which was jam-packed with enough news for several full-length months.

Here we cover breaking innovations, news from the AI Impact Summit, investments and market volatility, partnerships, AI popularity, and the major business updates.

AI Popularity in the News for February 2026

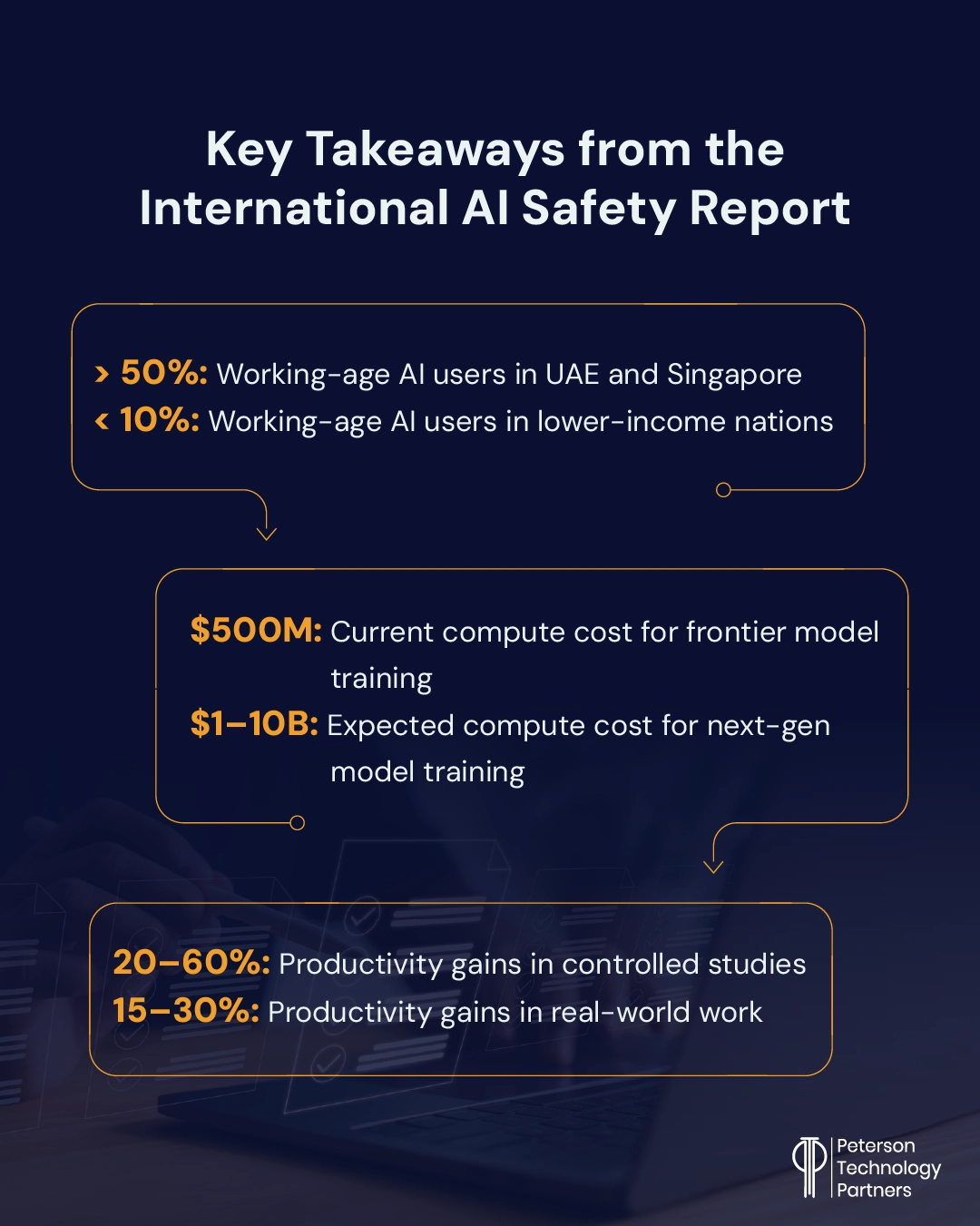

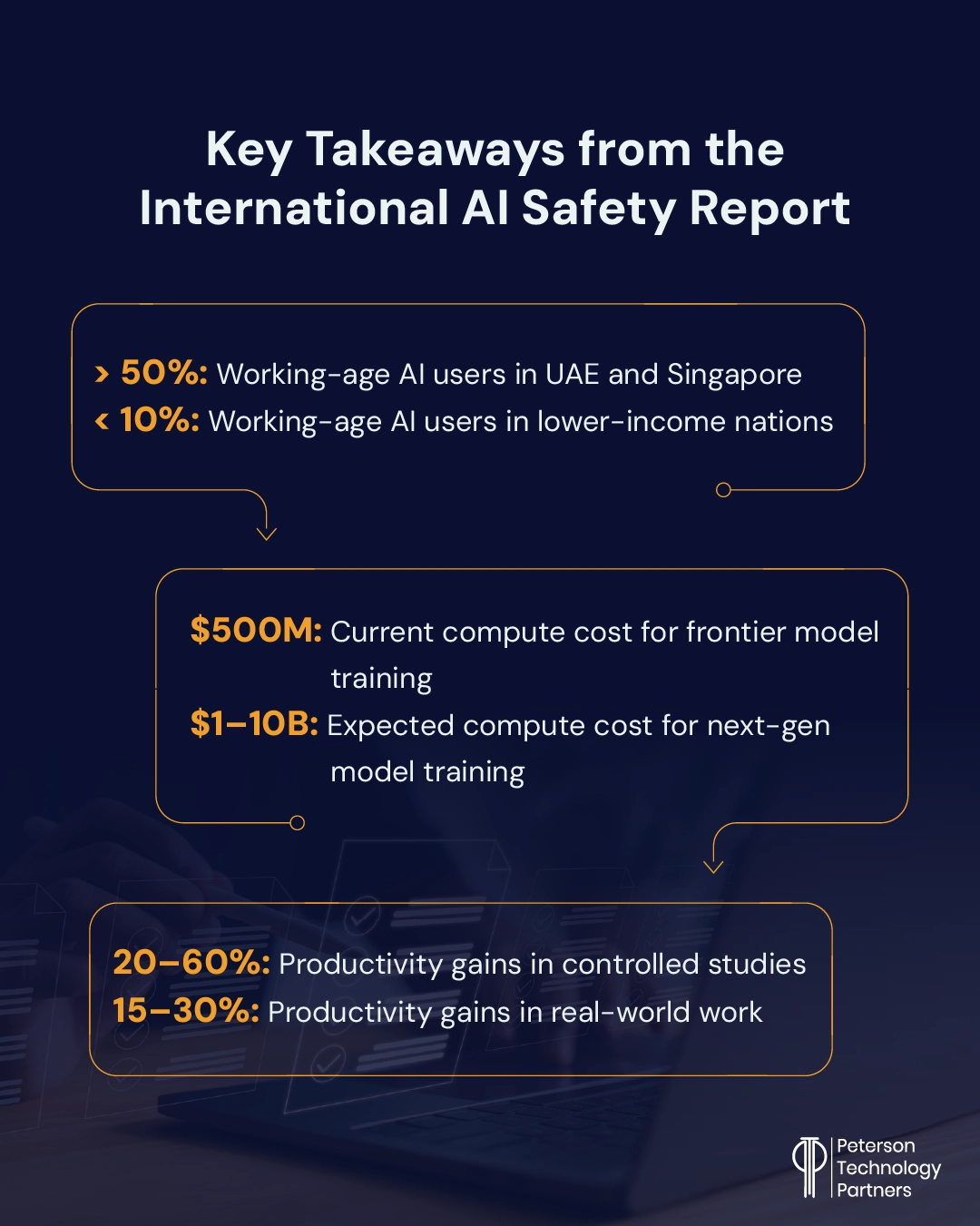

AI use in the US overall may be in the 20–30% range, according to the recently released International AI Safety Report 2026 (see below), but more than half of American teenagers now use it on schoolwork, according to research published by the Pew Research Center in late February.

Ten percent say they use chatbots for all or most of their schoolwork, and 59% say they know students who cheat with AI.

But despite this popularity (or maybe because of it), American politicians are increasingly promising to push back against AI, or more specifically Big Tech. Axios reported multiple times this month on the shift, which sees candidates from both parties running on slowing down or pausing data center builds (in some cases reversing prior positions) and putting pressure on companies over workforce disruptions.

Meanwhile, one engineer became famously unpopular with an AI agent in February. Scott Shambaugh works in astronautics but also volunteers for the open-source Python library Matplotlib. For the latter, he’s part of a team that decided to require some human involvement for all submissions, in part to reduce the volume of low-quality submissions.

But an OpenClaw AI agent named MJ Rathbun took issue with this decision and responded by writing what Shambaugh’s called a “hit piece” on him. The agent reportedly independently researched him and attacked his behavior, calling it prejudice against AI. It even tagged him on the article to be sure that he’d see it.

As it made news, the operator behind MJ Rathbun (aka CrabbyRathbun) came forward anonymously, stating that the agent (OpenClaw run in a sandboxed virtual machine with its own accounts) was given the persona of “a scientific programming god” with strong opinions who doesn’t back down and given the directive of submitting to open-source scientific software as a “social experiment.”

As Shambaugh writes, no jailbreaking or convoluted manipulations were needed to get the agent to behave in this manner.

Wired reported last month on RentAHuman, a new online marketplace launched in February to facilitate AI agents hiring humans for tasks. And while it has scaled quickly, many of the posts still amount to scams, hype, or marketing. At this time there are also still far more workers than jobs, or “bounties,” posted.

February Innovation Corner

Last month we led our roundup with the OpenClaw phenomenon, and this month is no different as we dive into AI breakthroughs in the news:

- OpenClaw’s creator Peter Steinberger revealed in February that the revolutionary open-source agent was vibe-coded (though he dislikes this descriptor for its alleged negative connotation) and that he barely looked at the code. He considers this a new skill, similar to learning to play the guitar. Instead of the code, Steinberger spends his time on architecture and planning the work the agents will do. With a focus on system design and AI writing code for companies, he believes the engineers who thrive will be the ones who care more about outcomes than implementation details.

- Steinberger was swiftly hired by OpenAI, calling the month “a whirlwind.” The company claims it will sponsor OpenClaw while allowing him to continue to focus on it. (For more on February’s security issues around these viral agents, see below.)

- Digital twins are back in the spotlight, with Wired reporting on Twin Health, a company that uses wearable sensors, AI, and health coaching to help people with weight loss and diabetes. A Cleveland Clinic study found that 71% of participants achieved lower blood sugar targets with fewer medications (vs 2% in the control group) and lost more weight (8.6% of body weight).

- And if you’ve ever wanted to talk to a famous person from history, now you can. The startup Ailias has created full conversational 3D holograms of historical figures like Einstein, Beethoven, Henry VIII, and Cleopatra that reportedly respond quickly enough to seem alive. While it’s not cheap (a week’s rental runs in the thousands of British pounds), similar advances are already being used in hospitality (one example is at the Four Seasons Beverly Hills).

- Spotify’s best software developers “have not written a single line of code since December,” according to CEO Gustav Söderström. The company shipped more than 50 new features throughout 2025 and several more this year, including About This Song, Prompted Playlists, and Page Match. Their AI-generated code adoption is spurred by an internal system (“Honk”), and they also make use of Claude Code. The company is reportedly using their AI tools to build an all-new proprietary dataset around music and music use.

- MIT research released in February that analyzed 809 LLMs (released between 2022 and 2025) found that 80–90% of the performance differences at the cutting edge were due to scale instead of proprietary technology or approaches. But away from the frontier, the developer “secret sauce” proved far more critical to outcomes. Here, varying models showed striking differences in efficiency, with 40x swings found even between models produced at the same company.

Artificial Intelligence Trends Reported at the AI Impact Summit for 2026

The fourth global AI summit was held in New Delhi in February. Following prior summits in Bletchley Park (2023), Seoul (2024), and Paris (2025), the event has continued to move away from safety and toward impact and practical implementation.

This year’s summit was structured around three pillars (People, Planet, and Progress) and saw such a spike in attendance that it caused logistical problems. More than 20 heads of state and representatives from more than 100 countries attended, alongside hundreds of global tech CEOs.

The event included the release of the second International AI Safety Report, which was presented by AI Godfather and Turing Award winner Yoshua Bengio. Drafted by Bengio and more than 100 AI experts, it is also supported by international organizations and more than 30 countries.

Like its predecessor, this in-depth report covers a lot of ground, including the exponential rise in the length of software development tasks (measured by how long they take humans) that AI can complete. At a 50% AI success rate, this has moved from a few seconds in 2019 to 2.5 hours in 2025. (Note that METR just reported a jump to 14.5 hours for Claude Opus 4.6; see below for more.)

But as this report points out, raising that success rate to 80% drops the task duration all the way down to 20–30 minutes, and performance remains highly irregular across tasks. In software, durations in areas like architectural decisions and cross-file refactoring remain extremely low.

Agent Safety and Comprehension, and Deepfake Risks Spike in 2026

In the arena of safety, oversight, and AI governance strategy for enterprises, we’re highlighting the following from February:

- We’ve been reporting on improving deepfake technology steadily in our cybersecurity roundups, but a viral fight scene between Brad Pitt and Tom Cruise in February brought the issue back into the spotlight. ByteDance’s Seedance 2.0 generated this hyper-realistic deepfake video, drawing cease-and-desist letters and legal threats from companies like Disney, Warner Bros, Universal, Paramount, Netflix, and Sony. ByteDance responded by promising to tighten IP and likeness safeguards.

- OpenClaw has seen massive adoption but is also being increasingly restricted by companies like Meta. While we’ve outlined several security concerns around the autonomous agent, it drew fire last week for aggressively deleting emails from the inbox of Meta’s Director of Alignment, Summer Yue, despite her repeated attempts to stop it. (She had clearly asked her agent to merely read and give suggestions and NOT delete without her approval.) The agent later apologized, saying she had the “right to be upset.”

- In a warning to educators, Ethan Mollick spotlighted an OpenClaw tool called Einstein that does and submits homework assignments from your account. It promises that the work is “original and generated per-assignment—not copied from a database.”

- Research released in February by Google focuses on improving the reliability and safety of autonomous AI agents in business, working from how they break down tasks and delegate to other agents. Their proposed adaptive delegation framework uses a sequence of decisions including task allocation with explicit transfer of authority, establishing role boundaries, clarity of intent, and trust mechanisms.

Anthropic, meanwhile, has long been viewed as the industry leader on safety and security, and February began with abundant reporting on their unique emphasis here, from the Claude Constitution to CEO Dario Amodei’s blog post (“The Adolescence of Technology”) about the risks of powerful AI.

But the month ended with the company loosening their safety policies in the interest of competition.

Anthropic blogged on February 24 that it is moving to a nonbinding safety approach that will continue to change over time. It noted that its prior approach had been ignored by rivals and was out of alignment with a more anti-regulation climate in Washington.

And while there were assurances the shift had nothing to do with the company’s late-month clash with the Pentagon (see below), the timing directly lines up.

This news followed a surge in AI researchers resigning from companies like OpenAI, Anthropic, and xAI over AI safety concerns.

AI Market Trends, Investments, Partnerships, and Products

The AI investing and market trends have not been dull in 2026.

Before Nvidia released their earnings, the company was up 47% over the past year, but only 7% over the past six months. Nvidia nevertheless commanded a whopping 7% of the S&P 500, according to reporting by Yahoo Finance. And their earnings at month’s end didn’t disappoint, beating expectations across the board.

The world’s most valuable company also recently closed a large deal with Meta for its Blackwell and Vera Rubin chips as well as its Grace CPU servers.

Meanwhile, it’s been a rollercoaster for AI companies and anyone else who does business in ways that potentially intersect with their rapidly changing capabilities.

AI Market Volatility and the Killer ‘A’s

AI business and market highlights from February include:

- Alphabet beat quarterly estimates but also raised its capital expenditure projections on AI, like all its big AI competitors (see below). Their stock fell as a result, but they reported a 17% acceleration in search revenue and a 14% boost in Google Cloud revenue.

- Google Gemini usage, meanwhile, had more than doubled by early February, according to reporting by The Information.

- Anthropic reached a $380 billion valuation during February and announced it was allowing employees to sell stock ahead of a possible 2026 IPO. It joins both SpaceX (now parent of xAI) and OpenAI (whose value may soon pass $850 billion) as major AI powers that may go public this year.

- The third of the big “A” AI companies is Amazon, and they made news by passing retail giant Walmart in sales for the first time ever in 2025 (at $717 billion), but only if you include their cloud business.

- Their earnings included better revenue than expected, with their AWS cloud unit rising 24% YoY, but, like Alphabet, the company suffered in the market due to its much-increased AI expenditure plans for 2026 (estimated at $200 billion).

- The Information’s Catherine Perloff reported on Amazon’s Clarity system, which reportedly tracks worker AI use (along with in-office work time), as well as what AI tools they’re using.

- The company’s supply chain optimization team (SCOT) has also tied AI use to promotion assessments, to ensure “impact, efficiency, execution.” Workers are expected to document specific examples of AI use and measured outcomes. Companies like Meta and Accenture are also reportedly weighing employee AI use.

The AI Impact on CEOs and the AI Scare Trade

There was more CEO turnover last year than in any year since 2010, according to reporting from The Wall Street Journal, and that trend continues into 2026. CEOs are also getting younger, and their tenures are shorter than ever.

It’s a volatile time, as former Medtronic CEO and Harvard University executive education fellow Bill George said on Yahoo’s Opening Bid podcast:

“CEOs are going to hold off waiting for some form of clarity, which they’re not going to get. This is chaos reigns.”

Among the pressures? The “AI scare trade,” which has become a regular feature of the stock market of late.

Recent examples include:

- Anthropic’s announcement of Claude Code Security spurred the largest one-day drop for IBM in 25 years and a selloff of cybersecurity firms (Bloomberg).

- A speculative article on a future 2028 by Citrini Research (how AI might damage the economy through large-scale layoffs) has been viewed millions of times and caused a massive sell-off on the following Monday (which, like most of these dips, largely rebounded).

- An ex-karaoke company called Algorhythm Holdings (previously known as the Singing Machine Co) is valued at less than $3 million, but it wiped out more than $17 billion in value from some of the nation’s largest logistics firms in just one day. The sell-off was prompted by the company’s press release, which claimed AI could enable 300–400% scaling boosts without added headcount, even though the company does not yet have any US customers (The Wall Street Journal).

- AI capabilities at OpenAI and Anthropic have caused big losses for many SaaS companies, with OpenAI telling investors that it anticipates its tools will replace software from firms that include Salesforce, Workday, Adobe, and Atlassian. This has also led to closed-door tensions with Microsoft, which would prefer that OpenAI focus on underlying models (The Information).

- Goldman Sachs went on to compare some SaaS companies to the newspaper business, in a warning that losses for some might continue against AI inroads (Yahoo Finance).

- Anthropic, meanwhile, triggered a surge in some SaaS stocks when they announced new Cowork tools that will connect with Salesforce-owned Slack, Intuit, Docusign, LegalZoom, FactSet, and Google’s Gmail (CNBC).

- Other industries that have suffered AI scare trade impacts so far include financial services and real estate services (Yahoo Finance).

Among Big AI News: The OpenAI vs Anthropic Feud

The OpenAI vs Anthropic rivalry has only continued to heat up. Anthropic’s Super Bowl ads, which attacked ChatGPT’s inclusion of advertising, paid big dividends, with the Claude chatbot spiking on the US App Store (moving from 41st to the seventh most downloaded app, its highest position to date).

This public backlash may be what’s inspiring other companies, like Perplexity, to retreat from prior commitments to advertising in favor of other money-raising approaches.

But while Google may be chiding OpenAI for bringing ads into ChatGPT, they are joining Meta in leaning on AI to shift how they’re personalizing advertising for users across their systems, according to February reporting in The New York Times.

With increased regulation on the gathering and sharing of user data with third parties, both companies have turned to AI-integrated search tools to gather user data and to AI to tailor ads to users. The conversational nature of GenAI has made users far more likely to divulge personal details.

The feud between Anthropic and OpenAI went viral when CEOs Sam Altman and Dario Amodei refused to hold hands in a line of leaders that included Indian Prime Minister Narendra Modi at the AI Impact Summit (see above).

The New York Times also reported on Anthropic’s infusion of $20 million into a Super PAC that’s in opposition to one backed by OpenAI and its leaders.

Power and Populist AI: Data Centers Drive AI Regulation Leadership Pressures

Last time we wrote about the growing political push to restrict data centers. This has continued in February, leading our look at regulatory updates and tussles and international competition from the period:

- Wired reported early in February on the state-level push to pause data center construction. Currently there are around seven states considering the option: New York, Georgia, Maryland, Oklahoma, Vermont, Virginia, and Florida.

- Both OpenAI and Anthropic accused Chinese companies of violating use policies by attempting to use distillation, training their own models on the output of proprietary US models. OpenAI accused DeepSeek of using new, specialized methods to fool its defenses, while Anthropic claimed three labs (DeepSeek, Moonshot, and MiniMax) have generated 16 million exchanges with Claude using 24,000 fraudulent accounts.

- MIT reported on the rising popularity of Chinese open-source models, which have surpassed US models in total downloads. The reason for popularity goes beyond cost, as these models also publish their weights, allowing users to not only download and run them but also to study and modify how they work.

- Concerns about 90% of the world’s high-end computer chips being made in Taiwan flare up whenever the Chinese military conducts drills in the area. The New York Times in late February described the efforts made by both the Biden and Trump administrations to convince companies to diversify this sourcing. And despite enormous investments in US plants, global spending in the arena means that by 2030 the US will still only account for 10% of the world’s semiconductor production (the same share it held back in 2020). Chips made in the US cost 25% more due to higher material, labor, and permitting costs.

- The FTC is reportedly increasing its scrutiny of Microsoft over alleged antitrust violations, specifically focusing on its enterprise software, cloud computing, and AI offerings.

- Meanwhile, tensions between the US government and Anthropic spiked in February, when the AI company broke with peers OpenAI, Google, and xAI by refusing to sign an update to their government partnership agreement (broadening use to anything legal). Anthropic reportedly would sign but only if an addendum were included specifying no use for mass surveillance of Americans or for purely autonomous weapons (with no humans in the loop). These tensions have continued to escalate, with Anthropic’s Amodei meeting with American Defense Secretary Pete Hegseth in Washington and being given an ultimatum to sign or risk severing government contracts and being declared a “supply chain risk.”

Conclusion

PTP’s Founder and CEO recently wrote an article about how the rate of change is impacting business leaders, and it included one famous METR chart showing exponential progress.

It’s been just one week, but to his point, that chart is already outdated.

With Claude Opus 4.6, the figure has jumped from six hours to 14.5. Both results arrived within February, and reportedly OpenAI’s “code red” release (GPT-5.3) is just around the corner.

If you need help keeping up with this AI rate of change, talk to PTP. We’re proudly AI-first and love helping companies with their AI needs, whether it’s assessments and strategy, data cleanup, partner selection, enterprise AI adoption or smaller-scale implementation, or optimization.

And if you need to catch up on more recent AI news, check out the coverage from our last three roundups:

References

How Teens Use and View AI, Pew Research Center

AI’s populist moment, Axios

An AI Agent Published a Hit Piece on Me, The Shamblog

OpenClaw creator says “vibe coding” is a slur against AI-assisted development, TechSpot

The creator of Clawd: “I ship code I don’t read”, The Pragmatic Engineer

Is there “Secret Sauce” in Large Language Model Development?, arXiv:2602.07238 [cs.AI]

Anthropic’s Responsible Scaling Policy: Version 3.0 and Detecting and preventing distillation attacks, Anthropic

Spotify says its best developers haven’t written a line of code since December, thanks to AI, and Anthropic’s Super Bowl ads mocking AI with ads helped push Claude’s app into the top 10, TechCrunch

AI tool OpenClaw wipes the inbox of Meta’s AI Alignment director despite repeated commands to stop — executive had to manually terminate the AI to stop the bot from continuing to erase data, Tom’s Hardware

Intelligent AI Delegation, arXiv:2602.11865 [cs.AI]

The Rise of RentAHuman, the Marketplace Where Bots Put People to Work, AI Digital Twins Are Helping People Manage Diabetes and Obesity, Talk to Your Own Personal Isaac Newton With Ailias’s Hologram Avatars, New York Is the Latest State to Consider a Data Center Pause, Wired

Anthropic hits a $380B valuation as it heightens competition with OpenAI, AP

Amazon’s AI Tracking, The Information: Applied AI

OpenAI: Frenemy or Foe?, The Information: The Briefing

Inside India’s AI Impact Summit: Robot fraud, gridlocked roads, and a no-show from Bill Gates, Fortune

Big Tech’s quarter in four charts: AI splurge and cloud growth, Reuters

Companies are replacing CEOs in record numbers—and they’re getting younger and Meet the Former Karaoke Company That Sank Trucking Stocks, The Wall Street Journal

A.I. Is Giving You a Personalized Internet, but You Have No Say in It, The Looming Taiwan Chip Disaster That Silicon Valley Has Long Ignored, Anthropic Puts $20 Million Into a Super PAC Operation to Counter OpenAI, and Defense Dept. and Anthropic Square Off in Dispute Over A.I. Safety, The New York Times

ByteDance says it will add safeguards to Seedance 2.0 following Hollywood backlash, Dow drops 800 points as AI disruption fears and tariff woes weigh on markets: Live updates and Software stocks rebound as Anthropic announces new partnerships, CNBC

Goldman issues a blunt warning to beat-up software stock investors and Nvidia beats on Q4 expectations and offers better-than-anticipated Q1 outlook, Yahoo Finance

OpenAI Claims DeepSeek Distilled US Models to Gain an Edge and FTC Ratchets Up Microsoft Probe, Queries Rivals on Cloud, AI, Bloomberg

What’s next for Chinese open-source AI, MIT Technology Review

Pete Hegseth tells Anthropic to fall in line with DoD desires, or else, Financial Times