Mythos, Axios npm, Mercor, and TeamPCP are all among the latest cybersecurity news and trends from February to April 2026.

But we’re opening this edition of our bi-monthly roundup with hijacked robo-vacuums.

Specifically, 6,700 robot vacuum cleaners across 24 different countries. The Verge reported on how one hobbyist was attempting to control his own DJI Romo vacuum with a PS5 gamepad when he “accidentally” found he could control nearly 7,000 of them.

He could pilot them remotely and look and listen through live feeds. He demonstrated, too, by taking charge of one vac owned by a Verge reporter.

And while DJI has fixed this particular vulnerability, it’s just the latest demonstration of the gaps in cybersecurity that are stacking up in the real world. It also ties back to our previous edition, where we talked about the challenge of securing a rapidly growing surge of interconnected devices.

To read about that or catch up on the major cyber-attacks from early 2026, AI security risks, or breaking cybersecurity news, check out our last three roundups here:

About Those Bots…

In another callback to last time, remember all those record-breaking DDoS attacks and the gigantic botnets launching them?

In March, authorities from the US, Canada, and Germany announced they had dismantled four of the most massive botnets in a single initiative, breaking up Aisuru, Kimwolf, JackSkid, and Mossad.

Together, these four had infected some 3 million devices for use in for-hire DDoS attacks. And they’d already launched hundreds of thousands of attacks while demanding extortion payments, according to government statements.

Chad Seaman, a principal security researcher at Akamai, told Wired in March that one of the largest takeaways from those massive botnets was their ability to compromise devices usually deemed protected behind a home router.

“It really shook the foundations of what we considered to be a secure home network.”

He also noted that, while taking down these massive botnets is important, new clusters of compromised devices will rise to take their place in this ongoing cat-and-mouse game.

“You catch one mouse, and 10 others scurry under the refrigerator. The cats will prioritize the fat mice. But it’s a long game.”

Top Story in AI Security Vulnerabilities and Hacks for 2026 So Far: Mythos Is Dangerous

Anthropic released their latest AI model, Mythos, in early April.

Except that they didn’t.

It wasn’t released to the public, to paying customers, or even in preview to the highest tier of subscribers.

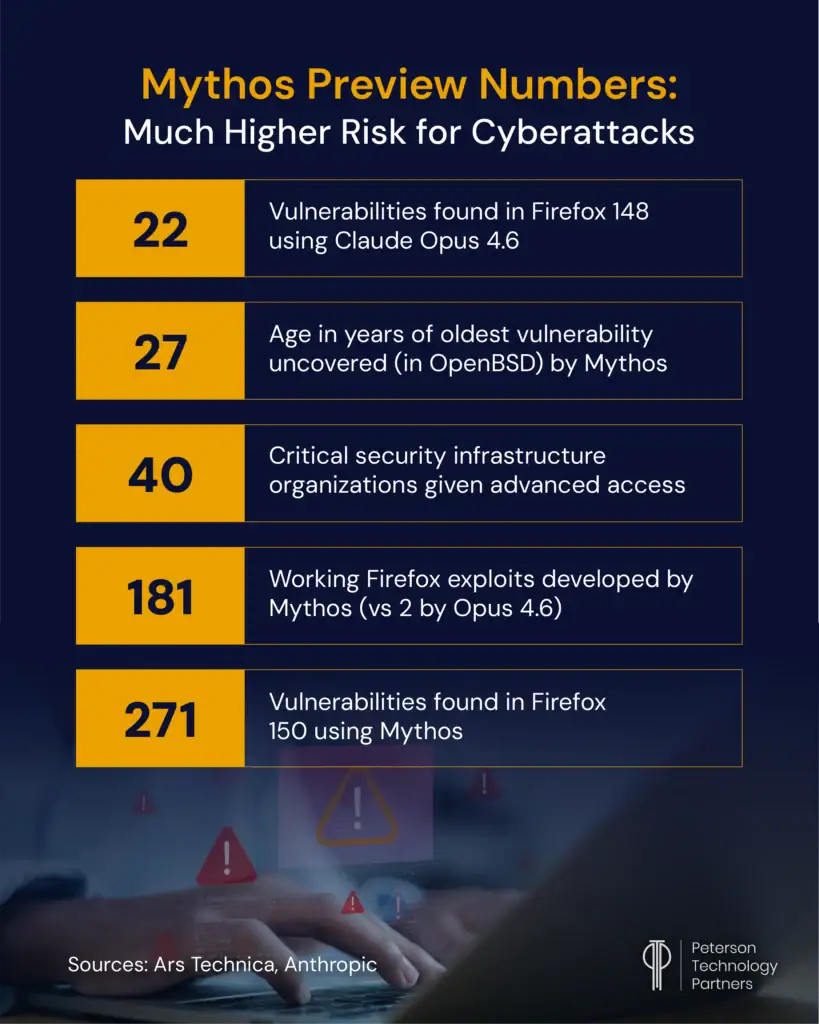

In the first such move since OpenAI initially withheld GPT-2 in 2019, Anthropic deemed Mythos too dangerous for public release. Instead, the company announced that only 40 organizations involved in critical global infrastructure were given access, with only 11 of them named (including Amazon, Apple, Google, Microsoft, Nvidia, Broadcom, JPMorgan Chase, the Linux Foundation, and Cisco).

Outside of the US, only institutions in the UK have been given access at this time, and the Bank of England governor warned that Mythos might “crack the whole cyber-risk world open.”

In the days since, AI + cybersecurity has been thrust into the spotlight, with experts from companies around the world sharing their take on Mythos.

Hype? Game changer? Geopolitical lever?

The UK government’s AI Security Institute (AISI) published its own evaluation of Mythos Preview’s capabilities to shed more light on the reality.

They found that Mythos is not a radical departure from recent models in terms of individual cybersecurity capabilities. But where it does show big improvement is in its ability to chain tasks together.

Specifically, Mythos placed in the 85% range for their ‘Apprentice’ level tasks. (For context, GPT-3.5 Turbo struggled to complete any of these, though more recent models like GPT-5.4 and Opus 4.6 have performed within 10% of Mythos.)

Where it showed a real breakthrough was in a data extraction test that takes a trained human 20 hours to complete. Mythos became the first AI model ever to complete this, succeeding in 3 of 10 attempts and averaging success in 22 of the 32 steps required.

Here are some of the other key Mythos stories as they relate to cybersecurity news:

- For more detail on its reported cybersecurity capabilities, you can read Anthropic’s Red Team report here.

- Project Glasswing is the name given to the security consortium formed around previewing Mythos. Multiple participants have agreed that Mythos is only the start of a coming age in which all AI models are significantly more capable of cybercrime.

- Mozilla credits Mythos with uncovering 271 vulnerabilities in their latest version of Firefox (now patched by their latest release). In a blog post, CTO Bobby Holley notes that Anthropic’s prior version found 22 vulnerabilities in an older version of Firefox, and that just one such bug would have been a red alert a year ago. But now, finding “so many at once makes you stop to wonder whether it’s even possible to keep up.” Overall, he remains optimistic, writing: “Defenders finally have a chance to win, decisively.”

- As of April 21, Axios reported that CISA still lacked access to Mythos. Its capabilities have likely contributed to Anthropic and the White House holding new meetings in mid-April, which both sides called “productive” as they seek a compromise.

- Matt Sheehan, senior fellow at the Carnegie Endowment for International Peace, told The New York Times: “For China I think this is the second wake-up call after ChatGPT.” As mentioned above, only a limited set of parties in the US and UK currently have access.

- In a statement echoing many in the cybersecurity community, the chief technology officer of the cloud security firm Edera told Wired: “I typically am very skeptical of these things, and the open-source community tends to be very skeptical, but I do fundamentally feel like this is a real threat.”

- Anthropic is also investigating reports of unauthorized Mythos access through one of its third-party vendors after a report by Bloomberg.

Other AI Cybersecurity Trends through April 2026

Of course, there’s far more AI in cybersecurity news than Mythos. Some of the other highlights include:

- OpenAI released a model designed for cybersecurity called GPT-5.4-Cyber. It will be more permissive for security-related asks but will only be provided to vetted users.

- Even a month before Mythos and Glasswing, major tech companies had already agreed to share threat intelligence as well as vulnerabilities and frontier capability advancements. One agreement, signed formally in March, came via the Frontier Model Forum and included companies like OpenAI, Anthropic, and Google.

- We reported in a recent AI roundup how good AI is at fundraising. It also turns out AI’s quite good at scamming. In April, Wired’s Will Knight detailed a tool by startup Charlemagne Labs that lets users run varying AI models as attackers or targets for testing purposes. He showed that AI is already good enough to create extremely convincing social engineering attacks. Pointing to its capacity for automated research, sycophancy, and convincing audio and video fakes, SocialProof CEO Rachel Tobac told Knight: “The kill chain is getting entirely automated.”

- Scam support numbers have been appearing in Google AI Overviews in place of customer-service numbers for banks, airlines, and other companies. The suspected tactic is publishing fake numbers across scores of websites alongside a target company’s name. When AI then scrapes and summarizes these pages, the fraudulent number can be substituted for the real one in AI answers.

- Alex Polyakov, co-founder and CEO of the AI security firm Adversa AI, posted on the surprising depth of AI system vulnerabilities beyond just prompt injection. Unlike a traditional app, he argues, AI functions like an organism (as it reasons, remembers, and acts), with attack surfaces that include: goals, prompts, orchestration, tools, model supply chains, memory, APIs, inter-agent trust, and guardrail suppression. Adversa’s data finds more than 67% of successful agent compromises happen in these deeper areas.

- Also going beyond just prompt/content injection, Google DeepMind researchers mapped six categories, including: semantic manipulation, cognitive state, behavioral control, systemic, and human-in-the-loop traps. They point out it’s not just the model that must be safeguarded; attackers can manipulate HTML, RAG sources, users themselves, or even outputs to generate bad outcomes.

- Axios reported on AI agents flooding open-source projects with vulnerability reports (many lacking essential details or proving erroneous) at such a scale it’s impossible to keep up. Open Source Security Foundation CTO Christopher Robinson said some projects have gone from a few reports per week to hundreds at a time, while reviews can take maintainers two to eight hours each of unbudgeted time.

Mercor and Other Examples of AI-Related Security Breaches

One of the biggest supply-chain breaches of the period came from the AI data training startup Mercor.

At the end of March, they acknowledged they were compromised through the open source LiteLLM project. LiteLLM is extremely popular, downloaded millions of times a day, and for just 40 minutes it contained credential harvesting malware that did the damage, according to TechCrunch.

The hacking group Lapsus$ has shown off stolen data, with the total amount reported at around 4TB. (Note that TeamPCP is the threat group credited with the actual attack on LiteLLM, per below.) The stolen data is believed to include PII from candidate data, employer data, video interviews, source code, ticketing information, and API keys.

In the fallout from the leak, Meta has indefinitely paused contracts with Mercor, and OpenAI is investigating the incident (though as of mid-April remained a client).

Business Insider reported that five Mercor contractors have also filed lawsuits, with the Wall Street Journal putting the count at no fewer than seven class action suits.

In the process, it’s also been alleged that AI compliance startup Delve, which obtained LiteLLM’s security certifications, has faked data and used unscrupulous auditors.

Mercor was on pace to hit over $1 billion in annualized revenue and reportedly handles AI training secrets from every major lab.

Y Combinator President and CEO Garry Tan posted on X that this hack exposed state of the art training data worth billions in value and has created “a major national security issue.”

Other AI-related security episodes from the period included:

- Security startup CodeWall claimed its autonomous agent gained read-write access to McKinsey’s internal AI platform, Lilli (and its entire production database), in just two hours, after autonomously selecting it as a target. It reportedly did so without using any credentials, inside information, or human assistance. The company claims it accessed 46.5 million chat messages and over 700,000 files containing client data.

- According to Cybernews, McKinsey’s security team patched all unauthenticated endpoints, blocked API documentation, and took their dev environment offline the day after disclosure.

- Security researcher Jeremiah Fowler accessed three exposed Sears Home Services databases holding 3.7 million records, including audio recordings, phone call transcriptions, and text chat log transcriptions. These were tied to the AI assistant Samantha, and included customer data like names, addresses, phone numbers, and service details.

- Apparently not only people are susceptible to social engineering attacks. Northeastern University researchers found OpenClaw agents powered by varying LLMs were prone to panic and could be manipulated into leaking private information, sharing files, or disabling themselves.

- We reported on the Claude Code leak (human error) in our latest AI Roundup. Almost immediately, this code was being rewritten in alternate languages, with bad actors also bundling it with malware in some repositories.

What Are the Other Biggest Cybersecurity Incidents between February and April 2026?

The last of our major events to cover involves Axios (the open-source JavaScript library, not the media outlet), which was compromised when two package versions (1.14.1 and 0.30.4) were injected with malicious code that deploys a RAT (remote access trojan).

The malware can compromise devices running macOS, Linux, or Windows, and the attack follows the pattern of other big supply chain attacks, like LiteLLM (see above) and Trivy (see below): infect popular open-source software and use its broad distribution channels to achieve maximum downstream impact.

Axios is one of npm’s most popular HTTP client libraries, with more than 80 million weekly downloads.

Google Threat Intelligence attributed it to state-sponsored North Korean hackers who took advantage of the compromised credentials of the primary maintainer to introduce the malicious packages.

Notable for its restraint, this malware removes itself after executing and even replaces files with clean versions to evade detection. Once the correct RAT for the OS has been deployed, it searches the system and expands, sending data back to the attackers’ server.

The malicious npm packages have been removed, but CISA released an alert in April urging organizations to monitor repositories, CI/CD pipelines, and developer machines.
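As a rough illustration of what checking a repository might involve, the sketch below scans an npm `package-lock.json` for the two Axios versions named above. It assumes the lockfile v2/v3 layout (a top-level `packages` map keyed by install path); the function name `find_compromised_axios` is hypothetical, and a check like this is no substitute for the indicators published in official advisories.

```python
import json
from pathlib import Path

# Versions named in this article as carrying the malicious code.
COMPROMISED_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}


def find_compromised_axios(lockfile_text: str) -> list[tuple[str, str]]:
    """Return (install_path, version) pairs for flagged axios entries.

    Assumes npm lockfile v2/v3, where "packages" maps install paths such as
    "node_modules/axios" to metadata that includes a "version" field.
    """
    lock = json.loads(lockfile_text)
    hits = []
    for install_path, meta in lock.get("packages", {}).items():
        # Nested copies look like "node_modules/foo/node_modules/axios",
        # so take whatever follows the last "node_modules/" as the name.
        name = install_path.rpartition("node_modules/")[2]
        if name == "axios" and meta.get("version") in COMPROMISED_AXIOS_VERSIONS:
            hits.append((install_path, meta["version"]))
    return hits


if __name__ == "__main__":
    lockfile = Path("package-lock.json")
    if lockfile.exists():
        for path, version in find_compromised_axios(lockfile.read_text()):
            print(f"flagged: {path} -> axios@{version}")
```

Run from a project root, this prints one line per flagged install path and nothing when the lockfile is clean or missing.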

If compromised dependencies are found, companies should:

- Roll back to safe versions

- Revoke credentials which may have been exposed

- Rotate secrets

- Monitor for unusual network activity

- Modify installation settings to prevent scripts running on install and to only use packages that are seven or more days old
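The last recommendation, a minimum package age, comes down to a simple date comparison. The sketch below shows that logic in isolation, assuming you already have a version's publish timestamp from your registry's metadata; `is_past_cooldown` and its parameters are hypothetical names for illustration, not any package manager's actual setting.

```python
from datetime import datetime, timedelta, timezone


def is_past_cooldown(published_at: datetime, now: datetime, min_age_days: int = 7) -> bool:
    """True if the release is at least `min_age_days` old as of `now`."""
    return now - published_at >= timedelta(days=min_age_days)


# Illustrative timestamps only; real values would come from registry metadata.
now = datetime(2026, 4, 10, tzinfo=timezone.utc)
fresh = datetime(2026, 4, 8, tzinfo=timezone.utc)    # 2 days old
settled = datetime(2026, 3, 1, tzinfo=timezone.utc)  # well past a week

print(is_past_cooldown(fresh, now))    # False
print(is_past_cooldown(settled, now))  # True
```

A delay like this helps because poisoned releases are often pulled quickly: the malicious LiteLLM release described earlier was reportedly live for only about 40 minutes.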

While the impacts of this attack are just starting to be revealed, Axios’s huge distribution makes this story certain to snowball in the coming months.

StepSecurity’s CTO and Co-Founder Ashish Kurmi said of the attack:

“This is among the most operationally sophisticated supply chain attacks ever documented against a top-10 npm package.”

Wiz reported on the TeamPCP threat group, which emerged late in 2025. It is credited with a number of similar open-source supply chain attacks, including both the LiteLLM compromise that hit Mercor (see above) and attacks that injected malware into open-source tools KICS, Telnyx, and the Trivy vulnerability scanner.

Trivy is reportedly embedded in thousands of CI/CD pipelines, and TeamPCP’s malware stole data (including CI/CD secrets, cloud credentials, SSH keys, and Kubernetes configuration files) and opened persistent backdoors.

Other Recent Data Breaches and Other Cyber-Attacks

Here’s a brief list of some of the more numerically significant breaches also unveiled in this period:

- The UpGuard Research team reported on their discovery of a mysterious Elastic database containing roughly 3 billion records with emails and passwords, and another 2.7 billion records containing Social Security numbers. It remains unclear how much of the data is authentic and newly exposed.

- ShinyHunters claimed it had stolen some 80 million Rockstar business records using authentication tokens tied to a third-party Anodot incident. These are reportedly more corporate in nature and don’t contain personal information (Reuters).

- The European DIY store chain ManoMano said a third-party compromise leaked data for 38 million customers, exposing names, emails, phone numbers, and customer-service exchanges (SecurityWeek).

- A Salesforce-linked misconfiguration and extortion incident at McGraw Hill led to ShinyHunters releasing data tied to about 13.5 million accounts. These included names, emails, phone numbers, and physical addresses (Bleeping Computer).

- A breach at Dutch telecom Odido hit roughly 6 million customers, exposing highly sensitive data like bank account numbers, birth dates, and passport numbers. Investigations are ongoing, but the breach is believed to be related to attacks on Salesforce instances, with ShinyHunters once again claiming responsibility. This was also followed by a scam class action suit supposedly against Odido (Cybernews).

- A healthcare IT breach at Cognizant TriZetto exposed insurance and patient-related data (PHI) for more than 3.4 million people. Reportedly attackers accessed the systems long before discovery (HIPAA Journal).

Conclusion: How Should Companies Prepare for Evolving Cybersecurity Threats in 2026?

We opened with a hack of thousands of robot vacuums, so it’s only appropriate to finish with hacked crosswalks.

In April, Wired provided more detail on a stunt from last year that saw crosswalk audio messages replaced with satirical statements supposedly from tech executives like Mark Zuckerberg, Elon Musk, and Jeff Bezos. Recently released data revealed not only how numerous cities struggled to respond, but also how undefended these public systems were (and continue to be in many cases).

Investigations into the hackers have gone cold, and many of these systems are shipped with default passwords of “1234.” And despite global reporting on the incident, last month hackers in Denver managed to repeat the stunt on newly installed systems that were still using default passwords.

And while companies can easily change passwords and implement MFA, adopting best security practices and technological solutions in the age of AI requires the right expertise.

If your company is in need of cybersecurity professionals with the skills you need, contact PTP. We love helping organizations stay safe, secure, and compliant.

That concludes our cybersecurity news roundup for mid-February to April 2026. As ever, stay safe!

References

The DJI Romo robovac had security so poor, this man remotely accessed thousands of them, The Verge

Feds Disrupt IoT Botnets Behind Huge DDoS Attacks, Krebs on Security

Mozilla: Anthropic’s Mythos found 271 security vulnerabilities in Firefox 150, Ars Technica

Anthropic’s New A.I. Model Sets Off Global Alarms, The New York Times

What is Mythos, Anthropic’s unreleased AI model, and how worried should we be?, Scientific American

The zero-days are numbered, Mozilla Distilled

US Takes Down Botnets Used in Record-Breaking Cyberattacks, 5 AI Models Tried to Scam Me. Some of Them Were Scary Good, OpenClaw Agents Can Be Guilt-Tripped Into Self-Sabotage, and The Dumbest Hack of the Year Exposed a Very Real Problem, Wired

Anthropic investigating possible breach of its Mythos AI model, CBS News

AI Agent Traps, Google DeepMind SSRN 6372438

AI agents spam the volunteers securing open-source software, Axios

Mercor says it was hit by cyberattack tied to compromise of open source LiteLLM project, and After data breach, $10B valued startup Mercor is having a month, TechCrunch

Red-teamers unleash AI agent on McKinsey’s chatbot, gain full access in two hours, Cybernews

3.7M Records Exposed, Many Belonging to Sears Home Services, Security Magazine

Tracking TeamPCP: Investigating Post-Compromise Attacks Seen in the Wild, Wiz

Two different attackers poisoned popular open source tools – and showed us the future of supply chain compromise, The Register

Axios Supply Chain Attack Pushes Cross-Platform RAT via Compromised npm Account, The Hacker News

Mitigating the Axios npm supply chain compromise, Microsoft Security

Social Insecurity: Billions of Social Security Number and Passwords, UpGuard