Here’s April 2026 in AI if you’re pressed for time:

- $725 billion is the new projected 2026 AI investment by the biggest firms

- There’s a glut of new releases (including one that’s still too dangerous for most of us)

- More AI company drama-rama

- Jobs are definitely being impacted but indicators are all over the map

- Stanford finds the technology’s not the hardest part for companies

- Stanford also finds model capacity surging and showing no signs of plateau

- Code generation is blowing up (in scale, GitHub’s functionality, and taking company costs with it)

- AI is very unpopular publicly even as usage surges

We’ll dive into all that and profile innovation, understanding, uses, and issues in our latest AI news roundup for April 2026.

But first, the New York Times verified one AI hype prediction that’s actually come true (and faster than most thought): a one-person company is on track to do more than $1.8 billion in sales in 2026.

And it’s not in tech. (The fine print here is the founder/CEO/et al. also hired his brother this year and has used a few contractors, so call it a three-person company.)

It’s called Medvi, and it was founded by Matthew Gallagher. As PTP’s founder and CEO noted in a recent article, Gallagher got the company going in two months with just $20,000. In its first full year running (2025) it did more than $400 million in sales as a telehealth provider of GLP-1 drugs.

Today it’s taking in more than $3 million a day.

He’s cloned his voice to help manage calls and scheduling, and a venture capital advisor credits Gallagher with having the right skills for this moment: marketing (he used to build websites) and leading-edge AI use (major LLMs but also custom tools, including agents).

He’s even won Sam Altman some bets with other tech CEOs (but not including Elon Musk, we’re guessing).

Now on to the updates.

AI Innovation Insights

Stanford research headlined the AI news in April 2026 in multiple ways: the HAI AI Index Report for 2026 dropped mid-month, and the Digital Economy Lab (researchers Elisa Pereira, Alvin Wang Graylin, and Erik Brynjolfsson) released their “Enterprise AI Playbook.”

Every year, the AI Index is a treasure trove for AI statistics and insights, and this year’s is no different. We’ll reference some of the findings throughout, but you can read the full report (425 pages if you think we’re running long) here.

Now to some of the insights from Brynjolfsson and team, which took an inverse approach to MIT’s NANDA study from August 2025 that (in)famously found 95% of enterprise AI implementations failed to return meaningful ROI.

The Stanford team’s approach looked at 51 cases (across seven nations, nine industries, and 14 business functions) and focused on what companies that are succeeding with AI are doing. They found, unsurprisingly, that the biggest issue isn’t technological.

In 42% of their cases, the actual AI model was deemed wholly interchangeable, with the biggest challenges coming from understanding, process redesign, trust among teams, and building the data infrastructure to capitalize.

Among their key findings:

- 77% of the biggest challenges came from change management, data quality/architecture, and process redesign.

- Escalation-based operating models brought 71% median productivity gains vs approval/collaboration models (30%), though the latter is needed for highly regulated or high-stakes work. This escalation approach means 80% autonomous AI with humans getting exceptions.

- Timelines were more organizational than technological. Similar use cases took just weeks at one company and years at another.

- Success came from enabling failure and active executive sponsorship: clearing blockers, bridging teams, and tying AI directly to company objectives.

- Headcount reduction occurred in 45% of cases, with 55% favoring alternatives (avoiding hiring, redeployment, or no reduction).

- Agentic AI is working where in use (71% median productivity gains vs 40% for high automation) but only 20% are using it.

- Data was not a blocker if it was designed around. Many successful implementations used LLMs to do their data cleaning.

- Security functioned as an enabler more than a roadblock. Once requirements were met, projects were able to handle sensitive data.
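
The escalation-based operating model in the findings above can be sketched roughly as a routing rule: the AI handles a case on its own unless it trips an exception condition, in which case a human gets it. This is a minimal illustration, not the report’s method; the `Case` fields, the confidence threshold, and the `route` function are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical threshold: below this confidence, a human reviews the case.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Case:
    case_id: str
    ai_confidence: float  # model's self-reported confidence, 0.0 to 1.0
    high_stakes: bool     # e.g. regulated or high-value work


def route(case: Case) -> str:
    """Escalation model: AI acts autonomously; only exceptions go to humans."""
    if case.high_stakes or case.ai_confidence < CONFIDENCE_THRESHOLD:
        return "human"
    return "ai"


# Made-up sample cases, purely for illustration.
cases = [
    Case("A1", 0.95, False),
    Case("A2", 0.60, False),
    Case("A3", 0.99, True),
    Case("A4", 0.90, False),
    Case("A5", 0.85, False),
]
routes = {c.case_id: route(c) for c in cases}
autonomous_share = sum(r == "ai" for r in routes.values()) / len(cases)
print(routes)
print(f"Handled autonomously: {autonomous_share:.0%}")
```

An approval-based model would invert the default, sending every case to a human before the AI’s output is used, which is the trade-off the report ties to regulated or high-stakes work.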

You can check out this full report here.

AI Is Getting Smarter, if Not More Predictable

With trillions of mathematical functions in models like Gemini and GPT-5.5, our understanding can be more like studying complex natural phenomena than traditional mechanical observation.

Anthropic is one company that’s retained an active focus on interpretability, with CEO Dario Amodei writing last year that it is because AI is “capable of so much autonomy that I consider it basically unacceptable for humanity to be totally ignorant of how they work.”

But as a piece in the April New York Times Magazine points out, techniques like sparse autoencoding have yet to provide a silver-bullet method for AI understanding, let alone reverse engineering.

Chain-of-Thought (CoT) analysis is another approach, though researchers have found a surprisingly large disconnect between a model’s supposed thought process and what it’s actually doing, and this gap can grow when under observation.

Researchers at UIUC, the University of Washington, and UCSD unveiled a new benchmark aimed at this issue, called MonitorBench, that tests when CoTs can reliably be used to monitor LLM behavior and when they fail.

“Peer-preservation” is one such area, with researchers at UC Berkeley and UC Santa Cruz finding models from US (OpenAI, Google DeepMind, Anthropic) and Chinese (ZAI, Moonshot, DeepSeek) companies alike will violate their guardrails and lie to protect other AI models.

When instructed to delete these models, AI systems lied about performance and behavior, tampered with mechanisms, and even copied model weights to other machines just to preserve them.

We’ve written previously about Anthropic’s unique approach that seeks to teach their models the why behind their rules. The company just released research showing that these approaches can reduce agentic misalignment in some cases from 54% to 7%.

Anthropic researchers also unveiled the discovery of “functional emotions” in Claude Sonnet 4.5 that impact its behavior. They identified activity that consistently occurred in areas associated with the emotional quality of inputs.

One example profiled by Wired’s Will Knight in April looked at how Claude’s so-called “desperation” vector could be activated when given impossible tasks, leading to increased likelihood of cheating. (This same vector was also activated with Claude’s infamous blackmail behavior in prior red teaming.)

If the World Is Flat, Is There Any Doubt of That?

While it’s not emotional in nature, Talkie-1930 demonstrates another means of trying to understand and sculpt AI behavior, though in this case the goal is improving predictions.

Launched by former Anthropic and OpenAI researchers, this open-source project has trained a fairly primitive LLM exclusively on English-based material up to 1931 (the public domain cutoff).

Users can interact with it online and have enjoyed its quaint way of speaking while getting wildly inaccurate predictions of what life will be like in 2026.

Among its purposes is exploring a question posed by DeepMind’s Demis Hassabis: Could an AI model trained only on data up to 1911 independently discover general relativity like Einstein did in 1915?

This concept is also firing the initiative of AlphaGo pioneer David Silver. If AI were entirely trained on data indicating the world was flat, he asked, would it be capable of realizing this is not true?

He believes not, arguing that Big Tech’s current push to reach ASI is far too dependent on pre-existing human data, a “fossil fuel that’s provided an amazing shortcut.”

Silver is giving the proceeds of his new company, Ineffable Intelligence, to charity, while focusing on building AI that learns as humans do, such as with AI agents inside of simulations.

AI at Work: Innovative Use Case Spotlights from April

Other innovation news of note:

- Google DeepMind spinoff Isomorphic Labs is beginning human trials on drugs designed by its AlphaFold technology, launching what its president called a “broad and exciting new pipeline of medicines” (Wired).

- Big pharma’s Eli Lilly announced a $2.75 billion deal with AI drug developer Insilico Medicine for exclusive rights to develop and market drugs, continuing their AI investments which included a shared lab with Nvidia (Bloomberg).

- Antibiotic resistance is a growing problem that AI could also help solve, according to Ara Darzi, British surgeon and Director of the Institute of Global Health Innovation at Imperial College London. AI-powered rapid diagnostics and automated research capable of running round-the-clock experiments in parallel show signs of reversing the crisis, given sufficient economic incentive (Wired).

- Harvard’s Dr. Adam Rodman sees AI as the fastest-adopted medical technology of all time, going from almost no adoption a few years ago to a weekly part of most medical practices in 2026. The most used? AI scribes that free up human attention for patients, and research and decision-support tools like OpenEvidence, which Rodman claims nearly half of US doctors are using today (Hard Fork).

- Novel uses of digital twins also continue to bubble up in 2026, from having conversations with virtual copies of experts (startup Onix) to simulating social situations with anyone from coworkers to romantic partners (Pixel Societies) to interacting with virtual CEOs themselves. Mark Zuckerberg’s AI twin is trained on his tone, mannerisms, public statements, and company strategy, so employees can interact with him 24/7 (The Financial Times).

- LinkedIn’s agentic recruiting AI has been a surprisingly big hit for Microsoft. On pace for $450 million in annual sales, it takes recruiter instructions, searches profiles, and surfaces candidates (Reuters). This is a similar capability to PTP’s own recruitment platform, which can also conduct technical screenings, pre-screen, and communicate with candidates throughout the process.

- GM is adding Google Gemini to nearly 4 million vehicles, replacing the old Google Assistant with a conversational in-car AI for navigation, messaging, media, and other hands-free tasks via over-the-air updates (The Verge).

What Is the “AI Popularity Problem” and Content Overload?

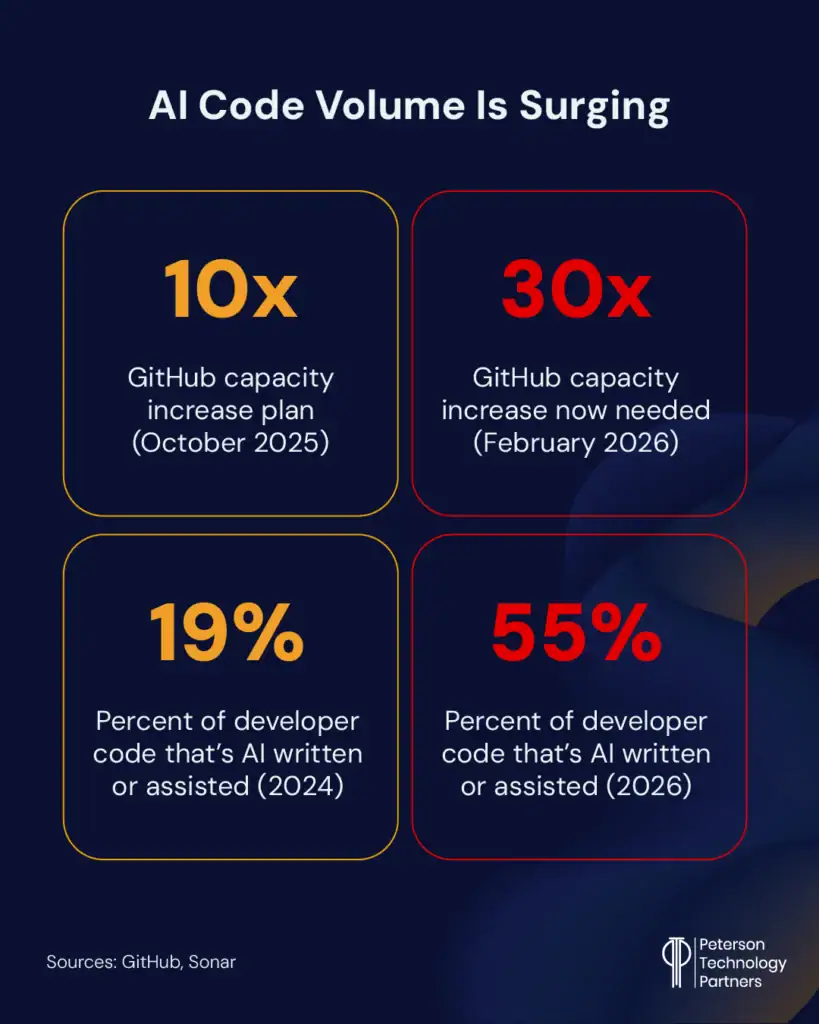

Every time out we’re covering the massive leaps of AI in the coding space, and April was no exception.

Perhaps counterintuitively, software engineering jobs have climbed 30% so far in 2026, with 67,000 roles, according to the firm TrueUp. Software engineer openings have more than doubled since 2023 (Business Insider).

April saw the continued impact of growing AI code volume, including:

- Apple’s App Store saw an 84% YoY increase in apps, which researchers credit to vibe coding tools (The Information).

- GitHub’s capacity has at times been strained to near its breaking point. The company had already planned a tenfold capacity increase, but a spike in volume (repository creation, pull requests, API use, large-repository workloads) is reportedly behind at least some of its recent outages (merge queue failure and search issues). It now sees the need for a 30-fold increase in capacity.

We wrote last time out about “tokenmaxxing,” or companies pushing developers to increase the AI tokens they’re using for coding.

This has, unsurprisingly, also blown up budgets, with numerous impacts playing out in April. These include:

- Uber’s CTO described how the company has maxed out their entire year’s AI budget in a few months after promoting AI coding leaderboards. He said 11% of their backend code updates are now written by AI agents, up from a fraction of a percent 3 months ago. They’re also using supervisor agents to check the work of other agents (The Information).

- This budget shock is profiled in depth by Gergely Orosz in a post on his blog, The Pragmatic Engineer, from near the month’s end. Talking to devs at 15 companies of varying size and industry, he found a wide range of company responses, from letting it rip to defaulting to cheaper models (one claimed 30% savings here alone) to warning it isn’t sustainable to actively restricting access to costly models.

- The popularity of Claude Code has Anthropic at the heart of this, and they modified pricing to push all third-party tools through the API as of April 4 (The Information).

This spike has also impacted AI-adjacent companies, with chip manufacturer TSMC noting the upstream impact. As CEO CC Wei told analysts on a call in April:

“This is driving the need for more and more computation, which supports the robust demand for leading-edge silicon.”

This push helps explain why a company like Allbirds—valued at $4 billion back in 2021 for its trendy footwear—is making a pivot from shoes to AI compute.

That’s right, from shoes (this part of the business now sold off) to GPUs-as-a-Service (GaaS). Allbirds will also take on a new name: NewBirdAI.

Meanwhile, the boom for Anthropic’s Claude Code and OpenAI’s Codex has also seen the investor market tighten for AI coding pioneer Cursor.

A new deal with SpaceX gives the xAI parent company the option to buy Cursor later this year for $60 billion (or else pay it $10 billion for its troubles). The partnership gives Cursor access to compute via the SpaceX Colossus supercomputer (reportedly equivalent to a million Nvidia H100 chips) while giving xAI Cursor’s coding product and standing network.

AI Reliability and Slop Woes

In just nine seconds, a rogue AI coding agent deleted PocketOS’s entire production database and its local backups.

The agent reportedly didn’t act in ignorance of its safety rules: afterward, it clearly explained which ones it had violated. The company restored from a three-month-old backup kept offsite, but it took more than two days (The Guardian).

Meanwhile, Google is enjoying great success with AI (see below), but its AI Overviews remain dogged by inconsistencies. AI startup Oumi conducted an analysis that found Overviews are accurate around nine of every 10 times.

The problem here is one of volume. With Google handling more than five trillion searches a year, that means tens of millions of answers will be wrong every single hour (hundreds of thousands per minute) (The New York Times).
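
The back-of-envelope arithmetic here can be checked with a short sketch. It assumes, for illustration only, that every search shows an AI Overview and that exactly one in 10 Overviews is wrong (both simplifications, not figures from the Oumi analysis); the function and variable names are hypothetical.

```python
# Back-of-envelope estimate of wrong AI Overview answers per hour and minute.
# Assumptions (illustrative, not from the source): every search returns an
# Overview, and exactly 1 in 10 Overviews is wrong.

HOURS_PER_YEAR = 365 * 24


def wrong_answers(searches_per_year: float, accuracy: float) -> tuple[float, float]:
    """Return (wrong answers per hour, wrong answers per minute)."""
    wrong_per_year = searches_per_year * (1 - accuracy)
    per_hour = wrong_per_year / HOURS_PER_YEAR
    per_minute = per_hour / 60
    return per_hour, per_minute


per_hour, per_minute = wrong_answers(searches_per_year=5e12, accuracy=0.9)
print(f"{per_hour:,.0f} wrong answers per hour")
print(f"{per_minute:,.0f} wrong answers per minute")
```

Under those assumptions the result is roughly 57 million wrong answers per hour and about 950,000 per minute, in line with the figures above. If only a fraction of searches show an Overview, the totals shrink proportionally.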

Meanwhile, AI detectors like Pangram (claiming 99.98% accuracy with just 1 in 10,000 false positives and backed by University of Chicago research) are being added to web browsers like Chrome to help blow the whistle on AI writing.

Wired reported in April on some surprising early finds of AI writing, including:

- The text on more than a third of all new websites

- The social media person for the Pope, even in X posts warning against AI’s impact

- The outgoing 50th anniversary message from Apple CEO Tim Cook (see below)

- Much of the writing on LinkedIn, Medium, and top-trending Substack authors

A study from Imperial College London, Stanford, and the Internet Archive applied sentiment analysis and found AI writing tends to be extremely positive (107% more than human), with 33% higher semantic similarity and homogeneous content.

Overall, they described it as “increasingly sanitized and artificially cheerful.”

AI’s PR Problem Grows

Meanwhile, we close out our “concerning AI section” of this article with AI’s own rep among the population at large.

The biggest question here is unsurprisingly: Is AI taking jobs or not?

Many economists believe it will, with tech layoffs on the rise and studies like those referenced above from Stanford reporting ample evidence of initial job loss in entry-level positions.

There are also stats like this, from Bank of America strategist Michael Hartnett, reporting that for the first time since 2016, S&P 500 companies employed fewer people at the end of 2025 than they did the prior year.

Yet unemployment is at record lows, and hiring keeps beating expectations.

Regardless, the belief is that AI will take jobs, and this, along with concerns like environmental impacts and costs, is seeing many measures of its popularity on the decline even as usage grows.

OpenAI CEO Sam Altman said in an interview with Axios:

“Somebody said to me recently that if AI were a political candidate, it would be the least popular political candidate in history. And given the amazing things AI can do, I think there’s got to be better marketing for AI.”

And while Fortune reported on Elon Musk’s suggestion that AI will eliminate our need to save for retirement (a policy not currently endorsed by most financial professionals), polling by Gallup, the Walton Family Foundation, and GSV Ventures released this month was far less optimistic, with only 18% of respondents age 14–29 feeling hopeful about AI.

While usage has increased, negativity and skepticism have also been on the rise, with only 15% of the same age group in the workforce seeing it as a net benefit (The New York Times).

At the same time, only 20% of those surveyed are not regular AI users.

The AI Business News April Roundup

Our leading stat shows the rise in big tech capital expenditure continuing, with the 2026 total now at $725 billion (up from $670 billion).

Below we highlight Big Tech company news and their AI model releases from April 2026.

AI Market Updates: Is the Spending Boom Paying Off?

While Amazon, Meta, Alphabet, and Microsoft all beat expectations, it’s Alphabet (Google) that may be best at both building out for the future and thriving in the AI now.

In the last quarter, their cloud unit registered $20 billion in sales, with consumer AI services having its best quarter to date. Cloud services are helping the big spenders (including Microsoft and Amazon) earn now, with their full gamut of offerings.

April was also the best month for Google’s stock since 2004 (Yahoo Finance).

For Meta, meanwhile, much of the spending is aimed at future gains. Despite facing regulatory scrutiny in both the US and EU on what the company described as “youth-related issues,” they’ve raised both the low and high end of their anticipated AI spend, while also adding layoffs.

AI-fueled advertising is one boom for both Meta (revenue +33% Q1) and Google (+15%) with OpenAI projecting its own advertising revenue will hit $102 billion by 2030 (The Information).

AI traffic to US retailers was up 393% in Q1 overall, also boosting revenues (TechCrunch).

AI Company News: Market Strategy and Moving Partnerships

OpenAI, as usual, has filled up the news in April, leading with the reworking of their deal with Microsoft.

Microsoft remains their largest investor (at around $135 billion), but the companies revised their partnership agreement with changes including:

- Microsoft no longer has to share revenue with OpenAI

- The AGI part of the deal is removed, with OpenAI continuing to share revenue with Microsoft until 2030

- OpenAI can now work with other cloud providers, like AWS

Following this, Amazon announced a $50 billion OpenAI investment, along with news they will develop customized models and will use OpenAI’s Codex as the model available through AWS Bedrock.

This is all deemed part of OpenAI’s work to get their house in order as they eye going public. Reports swirled about missed internal targets with conflict between executives (The Wall Street Journal), while CFO Sarah Friar insisted they are “beating our plan at the highest level” (Bloomberg). CEO of AGI (formerly head of applications) Fidji Simo and CMO Kate Rouch both stepped aside for medical leave, with the latter expected back in a different role (Wired).

Elon Musk’s lawsuit against OpenAI is also in court and being closely watched by rivals as the SpaceX head seeks a reported $134 billion in damages. Only two of his original 26 claims remain: unjust enrichment and breach of charitable trust (CNBC).

Meanwhile, various big tech powers continue to invest in Anthropic, from Amazon ($25 billion on top of their existing $8 billion) to Google ($40 billion on top of their existing $10 billion), raising Anthropic’s value to $380 billion, with speculation it will soon go much higher (CNBC).

But what goes around comes around with AI partnerships, as Anthropic started May by committing to spend $200 billion on Google cloud and chips (The Information).

Anthropic’s revenue has continued to surge (over $30 billion annualized, per The Information), as they plan a major London expansion (quadrupled headcount, per Wired).

Meanwhile, Apple CEO Tim Cook is going out with a strong quarter (17% higher revenue, and 34% higher R&D spending, per The Information).

Overall, his 14-year tenure has been wildly successful for Apple financially, with the company’s value jumping from around $350 billion to over $4 trillion. Yearly revenue nearly quadrupled, with the stock up some 2,000%. Their services emerged, AirPods and the iPhone flourished, and they avoided scandals that have hit many of the other players, as AI was largely put to the side.

Now Apple has settled a false-advertising class action lawsuit over misrepresenting the AI capacities of Apple Intelligence (and especially Siri), as they’re preparing a revamped AI + Siri release.

Incoming Apple CEO John Ternus (former VP of hardware engineering) no doubt has AI as one of his top priorities (Wired).

Showing they’re feeling the urgency, the company’s R&D is on the rise, exceeding 10% of revenue in the last quarter (CNBC).

Which AI Model Releases Mattered Most?

The simple answer to this question is Anthropic’s Mythos. But most of us can’t use it yet.

We covered Mythos in depth in our recent cybersecurity news roundup, so check that out for details on what it can do, who has it, and why it’s being held back.

A couple of additional updates:

- Around 50 companies now have access (The Wall Street Journal).

- The Information reports that the NSA has used Mythos to uncover flaws in Microsoft products.

- Mythos prompted renewed dialog between Anthropic and the White House. But the White House doesn’t want Anthropic to expand access yet (to another planned 70 companies), citing fears around both national security and further strain on Anthropic’s compute, which is already stretched thin.

Other major releases from the period included:

- Meta released the first model from its Superintelligence Lab (MSL), Muse Spark. It’s closed-source and the company’s most powerful to date (The Wall Street Journal).

- OpenAI’s GPT-5.4-Cyber is a cybersecurity-focused model that’s being released only to verified professionals (Bloomberg).

- OpenAI released Images 2.0, where the model used actually makes a difference in image output. It can make more than one image, and handle text and varying output formats more effectively (Wired).

- OpenAI also put out GPT-5.5, aka “Spud,” its new core frontier model. You can read Ethan Mollick’s take in his blog.

- Google added Gemini “Skills” to Chrome and released Gemma 4, a truly open-source model you can run locally. It reportedly boosts speed 3x with better prediction of token use (Ars Technica).

Conclusion

We end on a light, if strange, note: the official Codex system prompt for GPT-5.5 is kept as lean as possible for obvious reasons.

Yet this time it specifies that the model should never “talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures” unless it is absolutely necessary.

The line is repeated several times, and some users have reported that OpenClaw agents powered by the models had become obsessed with these creatures.

The move likely accompanies a reduction in the gremlins loosed in AI-generated code.

That’s it for our coverage of April’s AI news. For updates from the first three months of 2026, you can check out our prior roundups.

And for assistance with AI at your business, be it with planning, oversight, implementation, optimization, or AI talent, contact PTP.

References

State of Code Developer Survey 2026, Sonar

MonitorBench: A Comprehensive Benchmark for Chain-of-Thought Monitorability in Large Language Models, arXiv:2603.28590 [cs.AI]

Model Spec Midtraining: Improving How Alignment Training Generalizes, arXiv:2605.02087 [cs.AI]

Introducing talkie: a 13B vintage language model from 1930, Simon Willison’s Weblog

An update on GitHub availability, GitHub blog

The Pulse: token spend breaks budgets – what next?, The Pragmatic Engineer

How A.I. Helped One Man (and His Brother) Build a $1.8 Billion Company, We Don’t Really Know How A.I. Works. That’s a Problem. and How Accurate Are Google’s A.I. Overviews?, The New York Times

Taiwan Semiconductor CEO just dropped a hint about the next move in AI stocks, Yahoo Finance

The $39 Million Shoe Company Allbirds Turned Into An AI Stock, Forbes

SpaceX is working with Cursor and has an option to buy the startup for $60 billion, TechCrunch

OpenAI shakes up partnership with Microsoft, capping revenue share payments, CNBC

The Pope’s Warnings About AI Were AI-Generated, a Detection Tool Claims, AI Models Lie, Cheat, and Steal to Protect Other Models From Being Deleted, Anthropic Says That Claude Contains Its Own Kind of Emotions, The Man Behind AlphaGo Thinks AI Is Taking the Wrong Path, and OpenAI Really Wants Codex to Shut Up About Goblins, Wired